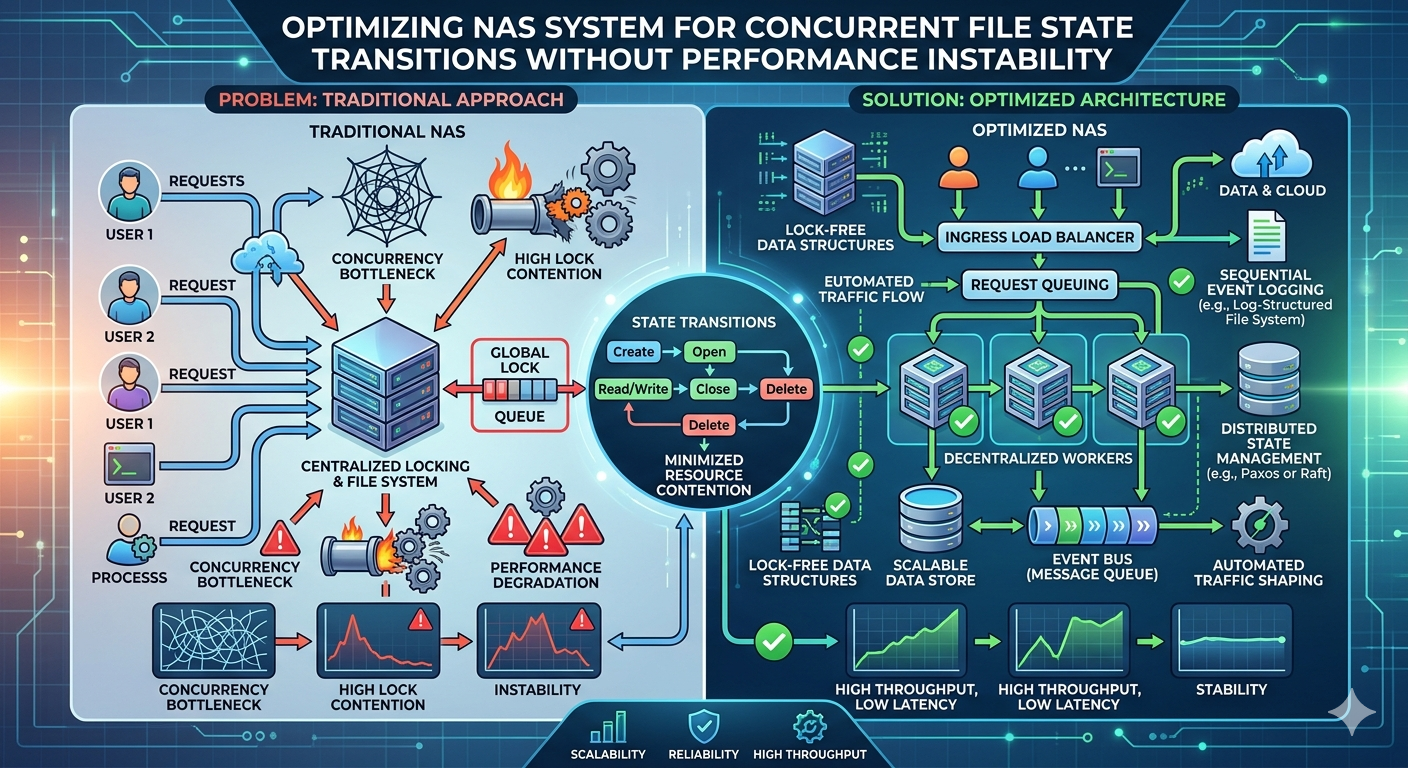

Handling thousands of simultaneous file operations requires a robust architectural foundation. When multiple clients attempt to read, write, or modify the same set of files, the underlying storage architecture must resolve state transitions without creating a bottleneck. Concurrent file state transitions—such as opening, locking, modifying, and closing files—generate significant metadata overhead. If not managed correctly, this overhead cascades into severe performance instability, leading to increased latency and application timeouts.

Network-attached storage environments are particularly vulnerable to these bottlenecks. A standard NAS system serves file-level protocols such as NFS or SMB, which carry heavy metadata payloads. As the number of concurrent connections grows, the CPU and memory needed to track file locks and access permissions can overwhelm the storage controller. The array then spends its time managing file states rather than serving actual read and write requests.

This guide details the technical mechanisms behind concurrent file contention and outlines systematic methods for optimizing an enterprise NAS. By understanding how file states are tracked, applying metadata caching, and choosing protocols to match each workload, system administrators can reduce performance degradation and maintain high throughput during peak operational loads.

Understanding File State Contention

At the core of any file-based storage architecture is the file system hierarchy. Every file state transition requires the storage controller to update inodes, verify access control lists (ACLs), and manage file locks to keep data consistent and preserve POSIX semantics. When hundreds of client machines access a centralized NAS system simultaneously, the controller must serialize these locking requests.

Serialization prevents data corruption by ensuring that two clients cannot overwrite the same block of data simultaneously. However, it also forces requests into a queue. As the queue grows, latency climbs nonlinearly, rising sharply as the controller approaches saturation. This phenomenon is known as lock contention. In environments handling high-frequency transactional data or virtual machine hosting, lock contention rapidly degrades total system performance.
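One way to see why queued lock requests hurt so much near saturation is a textbook single-server queue. The sketch below assumes an M/M/1 model (random arrivals, one serializing controller), which is an idealization and not a description of any particular array; the service rate of 1,000 lock requests per second is a hypothetical figure chosen for illustration.

```python
def mm1_mean_latency(arrival_rate: float, service_rate: float) -> float:
    """Mean time in system (waiting + service), in seconds, for an M/M/1 queue.

    arrival_rate: lock requests arriving per second.
    service_rate: lock requests the controller can serialize per second.
    """
    if arrival_rate < 0 or service_rate <= 0:
        raise ValueError("rates must be non-negative (arrival) and positive (service)")
    if arrival_rate >= service_rate:
        # The queue grows without bound once arrivals match or exceed capacity.
        return float("inf")
    return 1.0 / (service_rate - arrival_rate)


# Hypothetical controller capacity, for illustration only.
SERVICE_RATE = 1000.0

for utilization in (0.5, 0.9, 0.99):
    latency_ms = mm1_mean_latency(utilization * SERVICE_RATE, SERVICE_RATE) * 1000
    print(f"utilization {utilization:.0%}: mean latency {latency_ms:.1f} ms")
```

Under these assumptions, moving from 50% to 90% utilization multiplies mean latency by five, and the last few percent before saturation cost far more than that. Real controllers batch and parallelize lock handling, so treat the shape of the curve, not the numbers, as the takeaway.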

Transitioning to block-level protocols can mitigate some of these file-system overheads. An iSCSI NAS provisions storage as logical units, addressed by logical unit numbers (LUNs), rather than as a shared file system hierarchy. Because the file system is managed by the client operating system rather than the storage array, the storage controller is relieved of the burden of tracking individual file states and locks.

Architectural Bottlenecks in an Enterprise NAS

Scaling a storage environment introduces distinct engineering challenges. An enterprise NAS must support massive concurrency, high availability, and data replication. Each of these features adds compute overhead. When analyzing performance instability during concurrent file transitions, administrators must evaluate three primary bottlenecks:

Metadata Processing Limits

Metadata operations can account for more than half of all storage requests in a high-concurrency environment. Checking permissions, updating timestamps, and allocating blocks require minimal network bandwidth but exact a heavy toll on controller CPU cycles. If a NAS system lacks a dedicated metadata processing tier, these operations share resources with actual data payloads, causing unpredictable latency spikes.

Cache Thrashing

Storage arrays use RAM and fast flash media to cache frequently accessed data. During periods of high concurrency, multiple clients requesting different data sets can cause the cache to fill and empty rapidly, a condition known as cache thrashing. When thrashing renders the cache ineffective, the enterprise NAS is forced to serve requests directly from slower, capacity-tier drives, severely impacting performance.
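Cache thrashing can be reproduced with a very small simulation. The sketch below assumes a plain least-recently-used (LRU) eviction policy and a workload that repeatedly scans its working set in order; production arrays generally use more sophisticated policies, so `LRUCache` and `hit_ratio` are illustrative names rather than any vendor's implementation.

```python
from collections import OrderedDict


class LRUCache:
    """Minimal LRU cache that only tracks which block IDs are resident."""

    def __init__(self, capacity: int):
        self.capacity = capacity
        self._entries = OrderedDict()
        self.hits = 0
        self.misses = 0

    def access(self, block_id: int) -> bool:
        """Record one access; return True on a cache hit."""
        if block_id in self._entries:
            self._entries.move_to_end(block_id)  # mark as most recently used
            self.hits += 1
            return True
        self.misses += 1
        self._entries[block_id] = None
        if len(self._entries) > self.capacity:
            self._entries.popitem(last=False)  # evict the least recently used block
        return False


def hit_ratio(capacity: int, working_set: int, passes: int) -> float:
    """Scan blocks 0..working_set-1 in order, `passes` times, and report the hit ratio."""
    cache = LRUCache(capacity)
    for _ in range(passes):
        for block_id in range(working_set):
            cache.access(block_id)
    return cache.hits / (cache.hits + cache.misses)


print(f"working set fits the cache:   {hit_ratio(100, 100, 10):.0%} hits")
print(f"working set is one block over: {hit_ratio(100, 101, 10):.0%} hits")
```

With this access pattern, a working set just one block larger than the cache evicts each block shortly before it is needed again, so the hit ratio collapses instead of degrading gradually. Concurrent clients with disjoint data sets can push the combined working set over that edge.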

Network Protocol Overhead

Standard file protocols require continuous back-and-forth communication between the client and the server to confirm state changes. This "chattiness" consumes network bandwidth and adds latency. Implementing an iSCSI NAS architecture reduces this overhead. By encapsulating SCSI commands over IP networks, an iSCSI NAS streams block data more efficiently and avoids the per-file lock and state round trips that SMB and NFS require.

Strategies for Stabilizing Storage Performance

Resolving performance instability requires a combination of hardware provisioning, protocol selection, and software configuration. The objective is to decouple metadata processing from the primary data path and streamline how the storage controller handles concurrent requests.

Implementing Distributed Metadata Architecture

Advanced storage platforms address metadata bottlenecks by distributing the workload across multiple nodes. Instead of relying on a single monolithic controller to track every file transition, a scale-out enterprise NAS divides the file system tree so that different nodes take ownership of specific directories. With this horizontal scaling, concurrent state changes in different parts of the file system no longer queue behind one another, which substantially reduces lock contention.
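The idea of directory ownership can be sketched in a few lines. The example below assumes a static hash of the leading path components decides which node owns a subtree; real scale-out systems typically rebalance subtrees dynamically, and the function name `owner_node` and the node names are hypothetical.

```python
import hashlib
from pathlib import PurePosixPath
from typing import Sequence


def owner_node(path: str, nodes: Sequence[str], depth: int = 1) -> str:
    """Pick the metadata node that owns `path`.

    Ownership is keyed on the first `depth` directory components, so every
    file under the same subtree is handled by the same node.
    """
    parts = [p for p in PurePosixPath(path).parts if p != "/"]
    key = "/".join(parts[:depth])
    # A stable hash (unlike Python's built-in hash()) gives the same answer on every run.
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return nodes[int.from_bytes(digest[:8], "big") % len(nodes)]


# Hypothetical node names, for illustration only.
NODES = ["meta-node-a", "meta-node-b", "meta-node-c"]

for p in ("/projects/alpha/report.docx", "/projects/beta/data.csv", "/home/dana/notes.txt"):
    print(p, "->", owner_node(p, NODES))
```

Lock requests for files under different top-level directories can land on different nodes and proceed in parallel. Raising `depth` spreads a single hot directory tree across more owners, at the cost of more cross-node coordination for operations such as renames that span subtrees.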

Utilizing Non-Volatile Memory Express (NVMe) Caching

To reduce cache thrashing during concurrent transitions, administrators should deploy NVMe-based read and write caches. NVMe drives bypass legacy SAS/SATA host bus adapters and connect directly to the PCIe bus. This gives the enterprise NAS the microsecond-scale latency needed to absorb large bursts of file state changes. Configuring a dedicated NVMe tier specifically for intent logging and metadata caching keeps the controller from waiting on slower capacity-tier drives for these small, latency-sensitive writes.

Leveraging Block Storage for Virtualized Workloads

Not all workloads are suited for file-level sharing. Applications that perform intensive, concurrent state transitions, such as relational databases and virtual machine hypervisors, are often better served by an iSCSI NAS. When block storage is presented to the hypervisor, the hypervisor's clustered file system (such as VMFS) handles locking natively. The storage array simply executes block reads and writes and sidesteps the overhead of tracking individual file states.

Frequently Asked Questions

How does a NAS system handle file locking during concurrent access?

A standard NAS system uses opportunistic locks (oplocks) or leases in SMB, and delegations in NFSv4, to manage concurrent access. The storage controller grants a client permission to cache file data locally. If another client requests access to the same file, the controller recalls the lock, forcing the first client to flush its cached changes back to the array. This ensures data consistency but can cause latency if thousands of locks are recalled simultaneously.
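The grant-and-recall cycle can be modeled with a toy state machine. This is a simplified sketch, not the SMB or NFS wire protocol: it assumes one exclusive caching lease per file, and the class name `LeaseManager` and its return values are made up for illustration.

```python
class LeaseManager:
    """Toy model of a controller granting and recalling caching leases."""

    def __init__(self):
        self._openers = {}  # path -> set of client IDs that have the file open
        self._lease = {}    # path -> client ID holding the exclusive caching lease
        self.recalls = 0    # number of leases the controller had to recall

    def open(self, client: str, path: str) -> str:
        openers = self._openers.setdefault(path, set())
        openers.add(client)
        holder = self._lease.get(path)
        if holder is not None and holder != client:
            # Conflict: the holder must flush its cache before this open proceeds.
            del self._lease[path]
            self.recalls += 1
            return "recalled"
        if len(openers) == 1:
            # Sole opener: safe to let this client cache locally.
            self._lease[path] = client
            return "leased"
        return "shared"  # several openers, nobody caches

    def close(self, client: str, path: str) -> None:
        self._openers.get(path, set()).discard(client)
        if self._lease.get(path) == client:
            del self._lease[path]


manager = LeaseManager()
print(manager.open("client-1", "/share/ledger.db"))  # first opener may cache
print(manager.open("client-2", "/share/ledger.db"))  # triggers a recall
print(manager.open("client-3", "/share/ledger.db"))  # file is now simply shared
print("recalls so far:", manager.recalls)
```

Each "recalled" result stands for a round trip in which the controller waits for the first client to write back its cached data, which is why a burst of conflicting opens on many files shows up as a latency spike.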

What differentiates an enterprise NAS from consumer storage?

An enterprise NAS is engineered with dual-active controllers, redundant power supplies, and specialized software for automated tiering, snapshotting, and synchronous replication. More importantly, it runs an operating system designed to handle massive metadata loads and concurrent connections without kernel panics or processor bottlenecks.

When should I choose an iSCSI NAS over an NFS deployment?

Deploy an iSCSI NAS when hosting applications that manage their own file systems or storage layout, such as SQL databases, Microsoft Exchange servers, or VMware ESXi environments. Because an iSCSI NAS operates at the block level, it avoids the heavy metadata and locking overhead inherent to NFS, which typically lowers latency for transactional workloads.

Achieving Stable Storage Performance

Eliminating performance instability during concurrent file transitions requires a systematic evaluation of your storage infrastructure. Begin by utilizing performance monitoring tools to isolate your latency sources; determine whether your bottlenecks are tied to metadata processing, network protocol overhead, or cache saturation.
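As a first rough check before reaching for vendor tooling, a short probe can compare metadata latency with data latency on a mounted share. The sketch below is an assumption-laden starting point: `probe` and its parameters are hypothetical, it runs against a temporary local directory here, and client-side caching will mask server latency unless the probe targets a freshly mounted path.

```python
import os
import tempfile
import time
from statistics import median


def median_latency(fn, paths):
    """Call fn(path) for every path and return the median wall-clock time in seconds."""
    samples = []
    for path in paths:
        start = time.perf_counter()
        fn(path)
        samples.append(time.perf_counter() - start)
    return median(samples)


def read_file(path):
    with open(path, "rb") as handle:
        return handle.read()


def probe(directory, file_count=50, file_size=64 * 1024):
    """Create small test files, then time metadata (stat) and data (read) operations."""
    paths = []
    for i in range(file_count):
        path = os.path.join(directory, f"probe_{i}.bin")
        with open(path, "wb") as handle:
            handle.write(os.urandom(file_size))
        paths.append(path)
    try:
        return {
            "stat_median_s": median_latency(os.stat, paths),
            "read_median_s": median_latency(read_file, paths),
        }
    finally:
        for path in paths:
            os.remove(path)  # leave the target directory as we found it


# Demonstration against a local temporary directory; point `directory`
# at a mounted share to probe an actual array.
with tempfile.TemporaryDirectory() as tmp:
    for name, seconds in probe(tmp).items():
        print(f"{name}: {seconds * 1e6:.1f} microseconds")
```

If stat latency on the share is high relative to read latency, or climbs sharply under concurrent load, metadata processing is the more likely bottleneck; if reads dominate, look at cache saturation and the data path first.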

If metadata is the primary issue, investigate scale-out architectures or allocate dedicated NVMe storage pools for intent logs and metadata. For transactional databases and virtual machines experiencing high lock contention, moving those specific volumes to an iSCSI NAS can reduce latency considerably. By aligning your protocols and caching strategies with your specific workload demands, you can deliver consistent, high-speed data access across your entire organization.