Kubernetes was originally designed to manage ephemeral, stateless workloads. When a pod failed, the orchestrator simply started a replacement, and data persistence was deliberately offloaded to external infrastructure. Modern operational requirements, however, increasingly call for databases, message queues, and other stateful applications to run natively within Kubernetes clusters. This shift creates a basic tension: a container's filesystem is discarded when the container is removed, but stateful applications need their data to survive restarts, rescheduling, and node failures.

To close this gap, administrators need a robust storage architecture. Network-attached storage (NAS) systems are among the most effective options. By attaching network-based storage to containerized workloads, infrastructure teams can ensure that data outlives the pods that generate it.

The Evolution of Container Storage

In early container orchestration, deploying stateful applications was an operational risk. Local hostPath volumes tied pods to specific nodes, defeating the purpose of a distributed, highly available scheduler: if a node suffered a hardware failure, the pod could not be rescheduled elsewhere without losing its data.

To work around this, organizations adopted external block storage. Block storage provides excellent performance for single-instance databases, but it is a poor fit for shared workloads. Block volumes are typically provisioned with the ReadWriteOnce (RWO) access mode, meaning only one node can mount the volume read-write at a time. A block device formatted with a conventional single-node filesystem such as ext4 or XFS cannot safely be mounted by several nodes at once, so applications whose pods on different nodes must read and write the same files simultaneously need a different approach.
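The access modes mentioned above are plain strings in a volume's `spec.accessModes`. The following Python sketch encodes their documented semantics in a hypothetical helper, `fits_workload`, to show why RWO rules out writers on multiple nodes. Kubernetes enforces these rules itself, so the function is illustrative only.

```python
from enum import Enum


class AccessMode(Enum):
    """Values accepted in a PersistentVolume's spec.accessModes."""
    READ_WRITE_ONCE = "ReadWriteOnce"         # read-write by a single node
    READ_ONLY_MANY = "ReadOnlyMany"           # read-only by many nodes
    READ_WRITE_MANY = "ReadWriteMany"         # read-write by many nodes
    READ_WRITE_ONCE_POD = "ReadWriteOncePod"  # read-write by a single pod


def fits_workload(mode: AccessMode, pods: int, nodes: int, needs_write: bool) -> bool:
    """Hypothetical check: can one volume with `mode` serve `pods` pods spread over `nodes` nodes?"""
    if needs_write and mode is AccessMode.READ_ONLY_MANY:
        return False                      # ROX never allows writes
    if mode is AccessMode.READ_WRITE_ONCE_POD:
        return pods == 1                  # exactly one pod, cluster-wide
    if mode is AccessMode.READ_WRITE_ONCE:
        return nodes == 1                 # any number of pods, but all on one node
    return True                           # ROX (read-only use) or RWX
```

For example, a three-replica Deployment spread across three nodes that writes shared files only fits `ReadWriteMany`.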

The Role of NAS Systems in Kubernetes

Integrating NAS systems into Kubernetes lets organizations decouple storage from compute: data lives on a dedicated storage platform, while pods can be scheduled on any node that can reach it over the network. Because NAS relies on standard file-sharing protocols such as NFS and SMB, any node with the appropriate client software can mount the same share.
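For an NFS share that already exists, Kubernetes can consume it without any CSI driver through the built-in `nfs` volume source. The sketch below builds such a statically provisioned PersistentVolume as a Python dict (JSON output is valid input to `kubectl apply -f -`). The server name, export path, and the `static_nfs_pv` helper are hypothetical, and every node is assumed to have the NFS client utilities installed.

```python
import json


def static_nfs_pv(name: str, server: str, path: str, size: str) -> dict:
    """PersistentVolume that points at an existing NFS export (no CSI driver needed)."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolume",
        "metadata": {"name": name},
        "spec": {
            "capacity": {"storage": size},
            "accessModes": ["ReadWriteMany"],           # shareable across nodes
            "persistentVolumeReclaimPolicy": "Retain",  # keep data after the claim is released
            "mountOptions": ["nfsvers=4.1"],            # passed to the node's NFS mount
            "nfs": {"server": server, "path": path},    # built-in NFS volume source
        },
    }


pv = static_nfs_pv("shared-media", "nas01.example.internal", "/exports/media", "200Gi")
print(json.dumps(pv, indent=2))
```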

When multiple pods require concurrent read and write access to the same dataset, a requirement expressed in Kubernetes as the ReadWriteMany (RWX) access mode, NAS systems provide the necessary shared file system, file-locking, and concurrent-access mechanisms. This capability is critical for content management systems, machine learning pipelines, CI/CD workloads, and shared web server directories. With NAS-backed volumes, a multi-replica Deployment can write to a single, unified file system so that every replica sees the same files, although the application must still coordinate concurrent writes to the same file (for example, with file locks).
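A minimal sketch of an RWX claim, again as a Python dict that can be serialized with `json.dumps` and applied with `kubectl apply -f -`. It assumes a StorageClass named `nas-rwx` (hypothetical) backed by a file-based CSI driver; the helper names are illustrative.

```python
import json


def shared_pvc(name: str, namespace: str, size: str, storage_class: str) -> dict:
    """PersistentVolumeClaim requesting a share that pods on many nodes can write."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "accessModes": ["ReadWriteMany"],   # RWX: multi-node read-write
            "storageClassName": storage_class,  # assumed NAS-backed StorageClass
            "resources": {"requests": {"storage": size}},
        },
    }


def claim_volume(volume_name: str, claim_name: str) -> dict:
    """Entry for a pod template's `volumes` list that references the shared claim."""
    return {"name": volume_name, "persistentVolumeClaim": {"claimName": claim_name}}


pvc = shared_pvc("cms-uploads", "web", "50Gi", "nas-rwx")
print(json.dumps(pvc, indent=2))  # pipe to: kubectl apply -f -
```

Every replica of a Deployment whose pod template lists the same `claim_volume(...)` entry mounts the same share, regardless of which node it lands on.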

Scaling Operations with Enterprise NAS

While basic file servers can handle small deployments or development environments, production stateful applications at scale call for an enterprise NAS architecture. Enterprise NAS platforms typically deliver high availability, automated snapshots, and replication to other sites: synchronous over short distances, and asynchronous across distant geographic locations where latency makes synchronous writes impractical.

For a stateful application in production, storage downtime translates directly into application downtime. An enterprise NAS mitigates this by abstracting physical disks into redundant, highly available storage pools, typically served by redundant controllers. If a single storage controller or disk fails, workloads usually see at most a brief I/O pause during failover rather than an outage. Furthermore, when the array provides a CSI driver, storage administrators can expose its capabilities as Kubernetes StorageClasses that developers consume through PersistentVolumeClaims (PVCs). This enables dynamic provisioning of file shares on demand, eliminating manual export or share creation for each new volume.
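Dynamic provisioning is configured through a StorageClass. The sketch below uses the open-source Kubernetes NFS CSI driver (provisioner `nfs.csi.k8s.io`, from the kubernetes-csi/csi-driver-nfs project) purely as an example. An enterprise array's own CSI driver will have a different provisioner name and vendor-specific parameters, and the server hostname and share path here are hypothetical.

```python
import json


def nfs_storage_class(name: str, server: str, share: str) -> dict:
    """StorageClass that provisions each PVC as a new volume under an NFS share."""
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": "nfs.csi.k8s.io",                  # example driver, not a vendor array
        "parameters": {"server": server, "share": share},  # driver-specific settings
        "reclaimPolicy": "Retain",                         # keep data when a PVC is deleted
        "volumeBindingMode": "Immediate",
        "allowVolumeExpansion": True,
        "mountOptions": ["nfsvers=4.1"],
    }


sc = nfs_storage_class("nas-rwx", "nas01.example.internal", "/exports/k8s")
print(json.dumps(sc, indent=2))
```

`reclaimPolicy: Retain` trades automatic cleanup for safety: deleting a PVC leaves the data on the NAS for an administrator to remove deliberately.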

An enterprise NAS also typically provides data reduction technologies such as deduplication and compression. In a Kubernetes environment where many replicas write similar datasets or repetitive logs to shared volumes, these features can substantially reduce the physical storage footprint and therefore infrastructure costs, at a performance overhead that varies by platform.
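The effect of data reduction is simple arithmetic. Under the simplifying assumption that deduplication and compression ratios are independent (real arrays usually report a single combined ratio), the physical footprint is the logical size divided by their product. The ratios in the usage line below are illustrative, not measurements.

```python
def physical_footprint_gib(logical_gib: float, dedup_ratio: float, compression_ratio: float) -> float:
    """Estimated physical capacity after deduplication and compression.

    Assumes the two ratios are independent, so the combined ratio is their product.
    Ratios are written as N for N:1 (2.0 means the data shrinks to half).
    """
    if dedup_ratio < 1.0 or compression_ratio < 1.0:
        raise ValueError("reduction ratios must be >= 1.0")
    return logical_gib / (dedup_ratio * compression_ratio)
```

With 1,000 GiB of logical data, 2:1 deduplication, and 1.5:1 compression, `physical_footprint_gib(1000, 2.0, 1.5)` returns roughly 333 GiB.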

The Critical Importance of NAS Security

A paramount concern when connecting external storage to containerized workloads is NAS security. Because NAS systems serve data over network protocols, data must be protected both in transit and at rest. Traditional on-premises networks relied on a perimeter trust model, but the distributed, dynamic nature of Kubernetes calls for a zero-trust approach to storage access.

Comprehensive NAS security involves encrypting NFSv4.1 traffic (for example, with Kerberos using the `sec=krb5p` security flavor), integrating access control with Active Directory or LDAP, and segmenting the storage network. In a multi-tenant Kubernetes cluster, proper NAS security prevents a single compromised pod from escalating into a cluster-wide data compromise. Administrators should enforce least privilege at both the Kubernetes Role-Based Access Control (RBAC) layer and the storage export layer.
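Least privilege at the Kubernetes layer can start with a namespaced Role that lets a tenant manage its own PersistentVolumeClaims and nothing else storage-related. A minimal sketch with a hypothetical role name follows. Because PersistentVolumes and StorageClasses are cluster-scoped, a namespaced Role cannot grant access to them at all. A RoleBinding (not shown) would attach the Role to the tenant's users or service accounts.

```python
def pvc_tenant_role(namespace: str) -> dict:
    """Namespaced Role granting only PersistentVolumeClaim operations."""
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "Role",
        "metadata": {"name": "pvc-tenant", "namespace": namespace},
        "rules": [
            {
                "apiGroups": [""],                        # core API group
                "resources": ["persistentvolumeclaims"],  # claims only, no PVs or classes
                "verbs": ["get", "list", "watch", "create", "delete"],
            }
        ],
    }


role = pvc_tenant_role("team-a")
```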

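When an enterprise NAS is configured correctly, it authenticates each client before granting mount permissions, typically by restricting exports to known node addresses and, with Kerberos, verifying machine and user credentials. In a standard NFS deployment the NAS sees the Kubernetes node (and, with Kerberos, the user principal) rather than the individual pod or service account, so pod-level isolation comes from Kubernetes RBAC and per-tenant volumes. Neglecting NAS security exposes the organization to severe risks, including ransomware, unauthorized data exfiltration, and cross-tenant data corruption. Administrative access to the storage arrays themselves should also be protected with multi-factor authentication and comprehensive audit logging.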
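At the export layer, the same principle means limiting which clients may mount a share and how they authenticate. The sketch below renders a Linux `/etc/exports` entry; enterprise arrays express the same controls through their own export policies. It assumes the Kubernetes nodes sit in a dedicated subnet and that a Kerberos realm is already in place, since `sec=krb5p` requires keytabs on every node. The helper name and paths are hypothetical.

```python
import ipaddress


def nfs_export_line(path: str, node_subnet: str, read_only: bool = False) -> str:
    """Render an /etc/exports entry restricted to the Kubernetes node subnet.

    sec=krb5p   -> Kerberos authentication plus integrity and encryption on the wire
    root_squash -> map client root to an anonymous user on the server
    """
    # Raises ValueError for a malformed subnet or one with host bits set.
    network = ipaddress.ip_network(node_subnet)
    options = ["ro" if read_only else "rw", "sec=krb5p", "root_squash", "no_subtree_check"]
    return f"{path} {network}({','.join(options)})"
```

For example, `nfs_export_line("/srv/k8s", "10.20.0.0/24")` returns `/srv/k8s 10.20.0.0/24(rw,sec=krb5p,root_squash,no_subtree_check)`.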

Architecture and CSI Integration

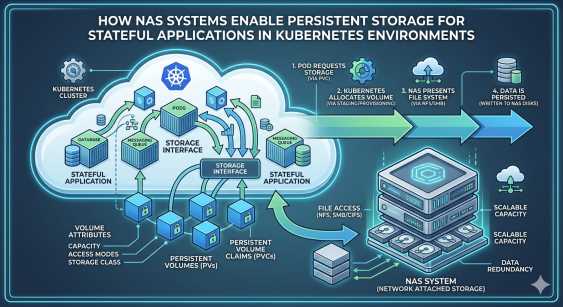

To take full advantage of NAS systems, infrastructure teams should use the Container Storage Interface (CSI). A CSI driver acts as the translation layer between Kubernetes and the storage platform: its components watch the Kubernetes API for volume requests and call the enterprise NAS's management interface to fulfill them.
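Behind this translation layer, Kubernetes components drive a driver through a sequence of gRPC calls defined by the CSI specification. The sketch below reconstructs that order from two capabilities a driver may advertise. File-based drivers typically skip `ControllerPublishVolume` because there is no block device to attach to a node. The function itself is illustrative and not part of any library.

```python
def csi_volume_lifecycle(controller_publish: bool, node_stage: bool) -> list[str]:
    """Order of CSI RPCs used to bring a newly claimed volume into a pod.

    controller_publish mirrors the controller capability PUBLISH_UNPUBLISH_VOLUME;
    node_stage mirrors the node capability STAGE_UNSTAGE_VOLUME.
    """
    calls = ["CreateVolume"]                     # external-provisioner, on PVC creation
    if controller_publish:
        calls.append("ControllerPublishVolume")  # external-attacher: attach to the node
    if node_stage:
        calls.append("NodeStageVolume")          # kubelet: per-node staging mount
    calls.append("NodePublishVolume")            # kubelet: mount at the pod's target path
    return calls
```

A typical NFS driver corresponds to `csi_volume_lifecycle(False, False)`, that is, provisioning followed directly by the node-side mount.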

When a developer creates a PersistentVolumeClaim, the CSI driver automatically provisions a volume (for NAS, usually a new share or directory) on the backend system, and the driver's node plugin mounts it into the pod on whichever node the pod is scheduled. This automation removes manual administrative bottlenecks and aligns storage provisioning with the declarative model of Kubernetes. CSI integration also exposes data management operations through the Kubernetes API, and therefore through kubectl: snapshots, clones, and volume expansion can be requested declaratively, provided the driver supports them. When a stateful application needs more capacity, the enterprise NAS can grow the underlying share, and drivers that support online expansion do so without restarting pods.
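These data management operations map to ordinary Kubernetes objects. The sketch below builds a `VolumeSnapshot` (which assumes the snapshot CRDs and snapshot controller are installed and that the driver supports snapshots), a PVC restored from that snapshot through `spec.dataSource`, and the patch body that grows an existing claim. The snapshot class and claim names are hypothetical.

```python
SNAPSHOT_GROUP = "snapshot.storage.k8s.io"


def volume_snapshot(name: str, namespace: str, pvc: str, snapshot_class: str) -> dict:
    """Request a point-in-time snapshot of an existing claim."""
    return {
        "apiVersion": f"{SNAPSHOT_GROUP}/v1",
        "kind": "VolumeSnapshot",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "volumeSnapshotClassName": snapshot_class,
            "source": {"persistentVolumeClaimName": pvc},
        },
    }


def restore_pvc(name: str, namespace: str, snapshot: str, size: str, storage_class: str) -> dict:
    """New claim pre-populated from a VolumeSnapshot in the same namespace."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "accessModes": ["ReadWriteMany"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
            "dataSource": {"apiGroup": SNAPSHOT_GROUP, "kind": "VolumeSnapshot", "name": snapshot},
        },
    }


def expansion_patch(new_size: str) -> dict:
    """Patch body that raises a claim's requested size (claims can grow, not shrink)."""
    return {"spec": {"resources": {"requests": {"storage": new_size}}}}


snap = volume_snapshot("uploads-nightly", "web", "cms-uploads", "nas-snapshots")
restored = restore_pvc("uploads-restored", "web", "uploads-nightly", "50Gi", "nas-rwx")
```

The expansion patch can be applied with `kubectl patch pvc cms-uploads -n web --type merge -p '{"spec":{"resources":{"requests":{"storage":"100Gi"}}}}'`; it only succeeds if the claim's StorageClass sets `allowVolumeExpansion: true`.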

Protecting stateful workloads requires a layered defense. Alongside robust NAS security configurations, organizations must implement consistent, automated backup strategies. Because NAS systems keep data outside the compute cluster, snapshots and backups can be taken at the storage layer without consuming CPU or memory on the Kubernetes worker nodes. As long as those array-side snapshots are managed independently of Kubernetes objects, and critical volumes use the Retain reclaim policy, the data remains intact and recoverable even after a catastrophic cluster failure or an accidental namespace deletion.
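Before relying on a namespace deletion being recoverable, it helps to check reclaim policies: with `persistentVolumeReclaimPolicy: Delete`, deleting a claim (including through its namespace) also deletes the volume on the backend. A minimal audit sketch over the `items` list returned by `kubectl get pv -o json` follows; `pvs_at_risk` is a hypothetical helper.

```python
# Patch body that switches a PersistentVolume to the Retain policy.
RETAIN_PATCH = {"spec": {"persistentVolumeReclaimPolicy": "Retain"}}


def pvs_at_risk(persistent_volumes: list[dict]) -> list[str]:
    """Names of PVs whose backing storage is deleted along with their claim."""
    return [
        pv["metadata"]["name"]
        for pv in persistent_volumes
        if pv.get("spec", {}).get("persistentVolumeReclaimPolicy") == "Delete"
    ]
```

For each name it returns, `kubectl patch pv <name> -p '{"spec":{"persistentVolumeReclaimPolicy":"Retain"}}'` applies the same change as `RETAIN_PATCH`.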

Sustaining Stateful Workloads with Network Storage

Moving stateful applications into containerized environments requires infrastructure that guarantees data persistence, scalability, and strict access controls. With robust NAS systems, organizations can meet the shared-storage requirements of modern distributed workloads.

Deploying an enterprise NAS provides high availability, data efficiency, and dynamic provisioning through CSI integration. At the same time, prioritizing NAS security protects critical data against internal and external threats in a highly dynamic container network. System administrators should evaluate their current storage architectures, eliminate ephemeral-data risks, and adopt secure network-attached solutions to fully realize the operational capabilities of Kubernetes.