Cache eviction storms present a critical threat to enterprise IT infrastructure. When thousands of concurrent requests hit a storage system, the cache can quickly become overwhelmed, and the system begins continuously swapping data in and out of memory. This leads to severe latency, degraded throughput, and potential system crashes. System administrators must architect environments that can absorb sudden spikes in input/output operations without compromising overall performance.

Network Attached Storage (NAS) provides a robust framework for managing these high-concurrency workloads. Modern NAS storage solutions incorporate advanced caching mechanisms and distributed architectures designed to prevent memory bottlenecks. By optimizing how active data is held and retrieved, these systems remain stable even under extreme network loads.

This analysis will equip IT architects and systems engineers with the technical knowledge needed to protect their environments. We will examine the exact mechanics of cache eviction and explain how enterprise-grade storage systems resolve these complex concurrency challenges.

Understanding Cache Eviction Storms

To prevent system degradation, administrators must first understand how memory management fails under pressure. Caching exists to keep frequently accessed data close to the processing unit. This reduces the time required to fetch data from slower disk drives.

The Impact of High-Concurrency Access

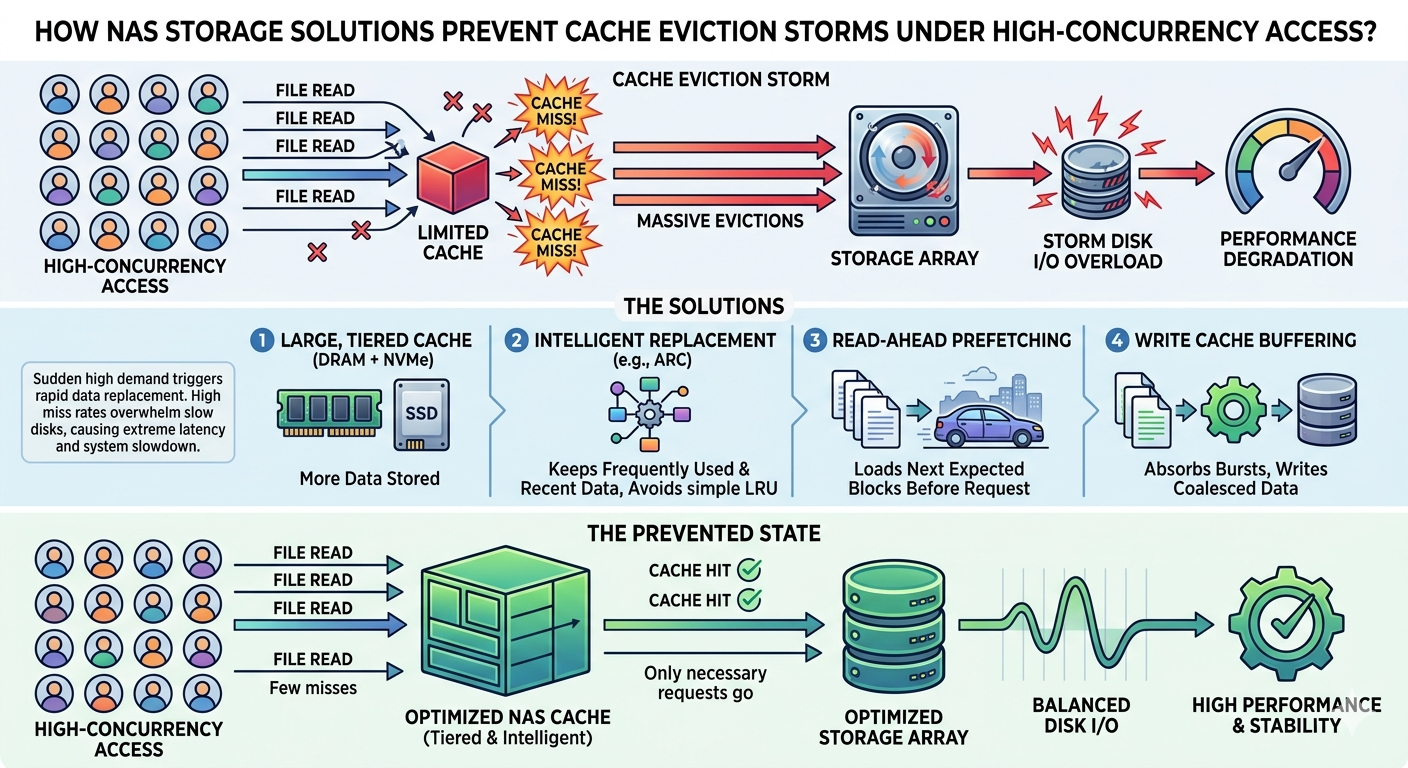

Problems arise when concurrency reaches critical thresholds. Hundreds or thousands of users might request different datasets simultaneously. The cache has a limited capacity. When it fills up, the system must evict older data to make room for new requests.

Under normal conditions, this process happens smoothly. Under high-concurrency access, however, the combined working set of all active requests can exceed the cache capacity, and requests arrive faster than the system can process the evictions. The cache enters a state of constant churn, known as thrashing: data is evicted just milliseconds before it is requested again. The CPU spends more cycles managing the cache than serving actual user requests, and system performance collapses.
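The churn described above can be reproduced with a toy simulation. The sketch below is a hypothetical illustration, not code from any NAS product: it models a plain LRU cache and replays a looping access pattern. When the working set fits in the cache, only the first pass misses; when the working set is just 10% larger than the cache, LRU evicts each block immediately before it is needed again and the hit ratio drops to zero.

```python
from collections import OrderedDict


class LRUCache:
    """Minimal LRU cache that only tracks hits and misses (no payloads)."""

    def __init__(self, capacity: int) -> None:
        self.capacity = capacity
        self.entries = OrderedDict()  # block id -> None, ordered oldest to newest
        self.hits = 0
        self.misses = 0

    def access(self, block: int) -> bool:
        """Return True on a hit; on a miss, insert the block and evict the LRU entry if full."""
        if block in self.entries:
            self.entries.move_to_end(block)  # mark as most recently used
            self.hits += 1
            return True
        self.misses += 1
        if len(self.entries) >= self.capacity:
            self.entries.popitem(last=False)  # evict the least recently used block
        self.entries[block] = None
        return False

    def hit_ratio(self) -> float:
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


def simulate(capacity: int, working_set: int, rounds: int) -> float:
    """Loop over `working_set` distinct blocks `rounds` times and return the hit ratio."""
    cache = LRUCache(capacity)
    for _ in range(rounds):
        for block in range(working_set):
            cache.access(block)
    return cache.hit_ratio()


if __name__ == "__main__":
    # Working set fits: only the first pass misses (810 hits / 900 accesses).
    print(f"fits:     {simulate(capacity=100, working_set=90, rounds=10):.2f}")
    # Working set overflows the cache by 10%: every single access misses.
    print(f"overflow: {simulate(capacity=100, working_set=110, rounds=10):.2f}")
```

The cliff is the point: hit ratio does not degrade gracefully with plain LRU once a cyclic working set exceeds capacity.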

Recognizing the Warning Signs

Administrators can identify an impending cache eviction storm through several key metrics. A sudden drop in the cache hit ratio (the fraction of requests served from cache) is the most obvious indicator. If the hit ratio falls below its established baseline, the system is serving too many requests directly from disk. Corresponding spikes in disk input/output (I/O) wait times and CPU utilization also signal that the cache is no longer absorbing the load.
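The two signals can be combined into a simple alert rule. The sketch below assumes you can export per-interval cache hit/miss counters and average disk wait from your monitoring system; the `IntervalStats` fields and all thresholds (a 10-point hit-ratio drop, disk wait three times baseline) are hypothetical placeholders to be replaced with your own baselines.

```python
from dataclasses import dataclass


@dataclass
class IntervalStats:
    """Counters for one sampling interval (hypothetical field names)."""
    cache_hits: int
    cache_misses: int
    disk_wait_ms: float  # average disk I/O wait during the interval


def hit_ratio(s: IntervalStats) -> float:
    total = s.cache_hits + s.cache_misses
    return s.cache_hits / total if total else 1.0


def storm_warnings(samples, baseline_ratio, max_ratio_drop=0.10,
                   baseline_wait_ms=5.0, wait_factor=3.0):
    """Return (index, ratio, wait) for intervals where the hit ratio falls well
    below baseline AND disk wait rises well above baseline.
    All default thresholds are illustrative assumptions, not vendor guidance."""
    alerts = []
    for i, s in enumerate(samples):
        ratio = hit_ratio(s)
        ratio_dropped = ratio < baseline_ratio - max_ratio_drop
        wait_spiked = s.disk_wait_ms > baseline_wait_ms * wait_factor
        if ratio_dropped and wait_spiked:
            alerts.append((i, ratio, s.disk_wait_ms))
    return alerts


if __name__ == "__main__":
    samples = [
        IntervalStats(950, 50, 4.0),   # normal
        IntervalStats(940, 60, 5.5),   # normal
        IntervalStats(600, 400, 22.0), # hit ratio collapses, disk wait spikes
    ]
    print(storm_warnings(samples, baseline_ratio=0.95))
```

Requiring both conditions reduces false alarms from, say, a brief dip in hit ratio that the disks absorb without extra wait.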

How NAS Storage Solutions Mitigate the Risk

Upgrading the physical storage architecture is often the most effective way to eliminate these bottlenecks. Modern NAS storage solutions combine specialized hardware with caching software to keep cache behavior stable under load.

Intelligent Tiering and Caching Algorithms

Basic storage arrays use simple algorithms such as Least Recently Used (LRU) to manage memory. Enterprise Network Attached Storage employs more sophisticated policies. These systems analyze access patterns in real time and can distinguish a sequential read operation from a random access request.
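One common way to tell sequential from random access is to check whether each request starts exactly where the previous one ended. The sketch below uses that heuristic with a hypothetical `window` parameter (how many contiguous requests in a row are needed before the stream is called sequential); real NAS firmware may use different signals and thresholds.

```python
class SequentialDetector:
    """Classify a per-client request stream as sequential or random.

    Heuristic (an assumption for illustration): the stream counts as sequential
    once `window` consecutive requests each start where the previous one ended.
    """

    def __init__(self, window: int = 4) -> None:
        self.window = window
        self.next_expected = None  # byte offset where a sequential read would continue
        self.run_length = 0        # consecutive contiguous requests seen so far

    def observe(self, offset: int, length: int) -> str:
        """Record one request (byte offset and length) and return its classification."""
        if self.next_expected is not None and offset == self.next_expected:
            self.run_length += 1
        else:
            self.run_length = 0  # any jump resets the run
        self.next_expected = offset + length
        return "sequential" if self.run_length >= self.window else "random"


if __name__ == "__main__":
    det = SequentialDetector(window=3)
    offsets = (0, 4096, 8192, 12288, 16384, 10_000_000)
    print([det.observe(off, 4096) for off in offsets])
```

Requiring several contiguous requests before switching to "sequential" keeps a single lucky adjacency in random traffic from being misclassified.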

By understanding the context of each data request, NAS storage solutions can pin critical data in the cache and prevent large, one-time sequential reads from flushing out frequently accessed random data. Many systems also use multi-tier caching: high-speed RAM for the hottest data, NVMe solid-state drives for warm data, and spinning disks for cold storage. This tiered approach acts as a shock absorber during concurrency spikes.
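Scan resistance can be illustrated with segmented LRU (SLRU), one well-known scan-resistant policy; it is swapped in here for illustration, and commercial NAS products may use other algorithms entirely. New blocks enter a small probationary segment and are promoted to a protected segment only on a second access, so a one-pass sequential read churns through probation without touching proven-hot blocks. The segment sizes and the `scan_demo` workload are hypothetical.

```python
from collections import OrderedDict


class SegmentedLRU:
    """Segmented LRU: blocks are promoted to `protected` only on a second hit,
    so a one-time scan can evict only other probationary blocks."""

    def __init__(self, probation_size: int, protected_size: int) -> None:
        self.probation = OrderedDict()
        self.protected = OrderedDict()
        self.probation_size = probation_size
        self.protected_size = protected_size

    def access(self, block) -> bool:
        """Return True on a hit, False on a miss."""
        if block in self.protected:
            self.protected.move_to_end(block)
            return True
        if block in self.probation:
            # Second access: promote from probation to protected.
            del self.probation[block]
            if len(self.protected) >= self.protected_size:
                # Demote the protected LRU block back to probation rather than dropping it.
                demoted, _ = self.protected.popitem(last=False)
                self._insert_probation(demoted)
            self.protected[block] = None
            return True
        self._insert_probation(block)
        return False

    def _insert_probation(self, block) -> None:
        if len(self.probation) >= self.probation_size:
            self.probation.popitem(last=False)
        self.probation[block] = None

    def __contains__(self, block) -> bool:
        return block in self.probation or block in self.protected


def lru_access(cache: OrderedDict, capacity: int, block) -> None:
    """Plain LRU, for comparison."""
    if block in cache:
        cache.move_to_end(block)
        return
    if len(cache) >= capacity:
        cache.popitem(last=False)
    cache[block] = None


def scan_demo():
    """Warm 10 hot blocks, run a 1000-block one-time scan, then count surviving hot blocks."""
    hot = list(range(10))
    scan = range(1000, 2000)  # hypothetical one-time sequential read
    slru, lru = SegmentedLRU(10, 10), OrderedDict()  # same total capacity: 20 blocks
    for _ in range(2):  # touch hot blocks twice so SLRU promotes them
        for b in hot:
            slru.access(b)
            lru_access(lru, 20, b)
    for b in scan:
        slru.access(b)
        lru_access(lru, 20, b)
    return sum(b in slru for b in hot), sum(b in lru for b in hot)


if __name__ == "__main__":
    print("hot blocks surviving (SLRU, LRU):", scan_demo())
```

With equal total capacity, SLRU keeps all ten hot blocks through the scan while plain LRU loses every one of them.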

Distributed Architecture in Network Attached Storage

Scale-out Network Attached Storage further protects the cache through a distributed architecture. Instead of relying on a single controller and a single pool of memory, a scale-out NAS distributes the workload across multiple nodes.

If one node experiences a surge in concurrent requests, the system automatically redirects traffic to other nodes with available cache capacity. This load balancing prevents any single cache from becoming overwhelmed, and the distributed design helps keep an eviction storm on one node from cascading across the entire storage environment.
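The redirection rule can be sketched as "serve from the home node unless its cache is nearly full, otherwise go to the node with the most headroom." Everything below is hypothetical: the `Node` fields, the 85% `high_water` mark, and the idea that the preferred index comes from, for example, hashing a file handle. Real scale-out NAS systems also weigh locality, replication, and network cost.

```python
from dataclasses import dataclass


@dataclass
class Node:
    """One scale-out NAS node (hypothetical fields)."""
    name: str
    cache_capacity: int  # blocks
    cache_used: int = 0

    @property
    def utilization(self) -> float:
        return self.cache_used / self.cache_capacity


def choose_node(nodes, preferred: int, high_water: float = 0.85) -> Node:
    """Route to the preferred (home) node unless its cache utilization is at or
    above `high_water`; otherwise redirect to the least-utilized node.
    `preferred` might come from hashing a file handle (an assumption here)."""
    home = nodes[preferred]
    if home.utilization < high_water:
        return home
    return min(nodes, key=lambda n: n.utilization)


if __name__ == "__main__":
    nodes = [Node("a", 1000, 950), Node("b", 1000, 400), Node("c", 1000, 700)]
    print(choose_node(nodes, preferred=0).name)  # "a" is 95% full, so traffic moves
    print(choose_node(nodes, preferred=2).name)  # "c" has headroom, so it serves locally
```

Keeping traffic on the home node while it has headroom preserves cache locality; redirection only kicks in when that node is at risk of thrashing.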

Implementing NAS for Optimal Performance

Purchasing the right hardware is only the first step. Proper configuration is essential for sustaining both performance and high availability.

Capacity Planning and Memory Allocation

Administrators must size the cache appropriately for their specific workloads. A general rule is to allocate enough high-speed cache to hold the active working set, meaning the data accessed repeatedly within a given time window. If the working set regularly exceeds the available cache, eviction storms become very likely. Engineers should analyze historical access logs to determine the true size of the working set and provision the Network Attached Storage accordingly.
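One way to estimate the working set from access logs is to slide a time window over the log and record the peak number of distinct blocks referenced within any window. The sketch below assumes a hypothetical log format of `(timestamp_seconds, block_id)` pairs sorted by time and a fixed block size; the window length is a tuning choice, not a standard value.

```python
from collections import Counter, deque


def peak_working_set_bytes(log, window_s: float, block_size: int) -> int:
    """Peak number of distinct blocks referenced within any `window_s`-second
    window, multiplied by `block_size`.

    `log` is an iterable of (timestamp_seconds, block_id) pairs sorted by
    timestamp (a hypothetical export format)."""
    window = deque()   # entries currently inside the time window
    counts = Counter() # block_id -> references inside the window
    peak = 0
    for ts, block in log:
        window.append((ts, block))
        counts[block] += 1
        # Drop entries that fall outside the window (ts - window_s, ts].
        while window and window[0][0] <= ts - window_s:
            _, old = window.popleft()
            counts[old] -= 1
            if counts[old] == 0:
                del counts[old]
        peak = max(peak, len(counts))
    return peak * block_size


if __name__ == "__main__":
    log = [(0, "a"), (1, "b"), (2, "a"), (3, "c"), (10, "d")]
    # At t=3 the window holds a, b, c: 3 distinct blocks x 4096 bytes.
    print(peak_working_set_bytes(log, window_s=5, block_size=4096))
```

Comparing this peak against the cache size across several busy periods gives a more realistic sizing target than average utilization does.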

Tuning the Storage Environment

Storage protocols must be tuned to match the network infrastructure. Administrators should adjust block sizes and the network maximum transmission unit (MTU) so that data flows efficiently between the NAS and the application servers. Enabling read-ahead caching for sequential workloads can also prevent unnecessary cache misses.
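The effect of read-ahead on a sequential workload can be estimated with a small miss counter. The sketch below is a simplified model, not any vendor's algorithm: the cache is unbounded to isolate the read-ahead effect, and prefetch of the next `read_ahead` blocks (a hypothetical parameter) is triggered only on a miss that continues a sequential run.

```python
def count_misses(requests, read_ahead: int) -> int:
    """Count cache misses for a stream of block numbers.

    Simplified model (assumptions): the cache never evicts, and on a miss that
    directly follows the previous block, the next `read_ahead` blocks are
    prefetched into the cache."""
    cached = set()
    misses = 0
    prev = None
    for block in requests:
        if block not in cached:
            misses += 1
            cached.add(block)
            if read_ahead and prev is not None and block == prev + 1:
                cached.update(range(block + 1, block + 1 + read_ahead))
        prev = block
    return misses


if __name__ == "__main__":
    print(count_misses(range(100), read_ahead=0))        # every block misses
    print(count_misses(range(100), read_ahead=8))        # prefetch absorbs most misses
    print(count_misses([5, 50, 17, 80], read_ahead=8))   # random access: no benefit
```

For a 100-block sequential read this model drops misses from 100 to 12, while random access gains nothing, which is why read-ahead is usually enabled only for streams detected as sequential.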

Securing High Availability for Your Infrastructure

Maintaining consistent storage performance under high concurrency requires systematic planning and robust hardware. Cache eviction storms can paralyze an organization, but they are largely preventable. By implementing intelligent tiering, scale-out architectures, and proper capacity planning, administrators can isolate their applications from memory thrashing.

Review your current cache hit ratios and disk latency metrics this week. If you observe consistent degradation during peak usage hours, it is time to evaluate your storage architecture. Consult with your hardware vendor to explore how modern NAS storage solutions can stabilize your data layer and secure high availability for your critical applications.