Data generation is growing at an unprecedented rate, forcing enterprise IT environments to rethink how they manage and retrieve information. Traditional storage architectures often struggle to keep up with the demands of modern applications, leading to bottlenecks that degrade overall system performance. To address these challenges, administrators are increasingly turning to advanced mechanisms within their infrastructure to optimize how files are written, stored, and retrieved.

At the core of this transformation are modern NAS solutions. Network-Attached Storage has evolved significantly from simple file-sharing repositories into highly sophisticated ecosystems capable of handling petabytes of unstructured data. However, simply adding more capacity to a network does not solve the fundamental issue of access speed. When millions of files reside on a single file system, locating and serving the right data quickly requires a systematic approach to data management.

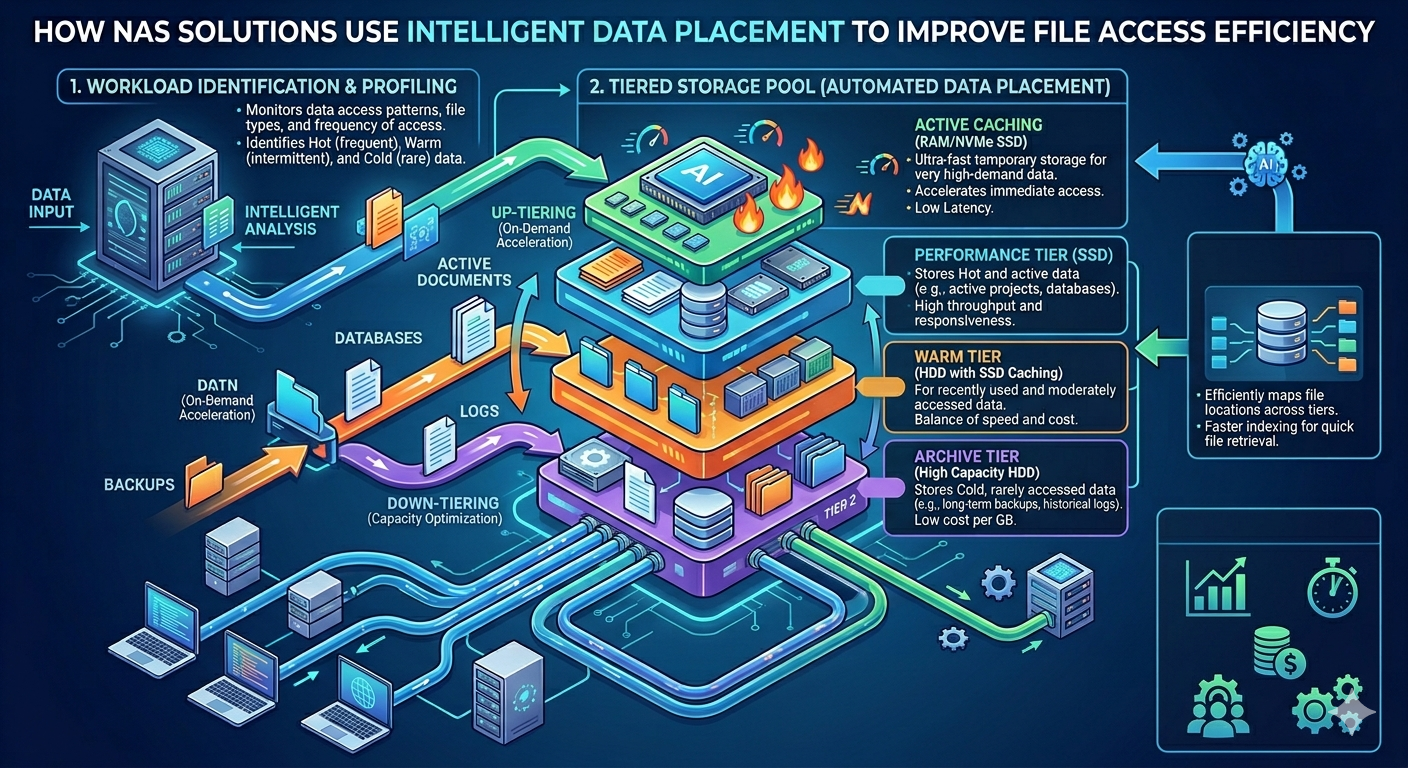

This is where intelligent data placement becomes critical. By leveraging machine learning algorithms and real-time usage analytics, intelligent data placement dynamically moves files across different storage tiers based on their access frequency and business value. This automated process ensures that highly active data remains on the fastest available media, while archive data is pushed to more cost-effective drives.

Understanding the mechanics behind this technology is essential for engineers and IT architects aiming to build scalable environments. By examining how NAS storage systems analyze workloads, categorize data, and implement data protection features like Immutable Snapshots for NAS, organizations can improve their file access efficiency and secure their critical digital assets.

The Mechanics of Intelligent Data Placement

Intelligent data placement operates on the principle that not all data holds equal value at any given moment. A file created and accessed heavily today might not be opened again for months. Modern NAS solutions monitor these access patterns at a granular level, analyzing metadata to make automated decisions about where specific blocks of data should reside.

Hot, Warm, and Cold Data Tiering

The foundation of intelligent data placement is automated storage tiering. High-performance NAS Storage arrays typically consist of a mix of storage media, including NVMe drives, standard Solid State Drives (SSDs), and traditional Hard Disk Drives (HDDs).

When data is categorized as "hot" (meaning it is accessed continuously by applications or users), the system automatically pins it to the NVMe or SSD tier. This gives hot data the lowest latency and highest throughput the array can offer. As the data ages and becomes "warm" or "cold," the system's algorithms migrate it to high-capacity HDDs. This continuous background migration happens transparently to the end user, so the fastest storage media stays reserved for the workloads that need it most.
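The hot, warm, and cold categorization can be illustrated with a minimal sketch. It is not how any particular NAS product decides placement: the thresholds, the `FileStats` fields, and the tier names are all assumptions made for this example, and a real array would tune or learn such values per workload.

```python
from dataclasses import dataclass

# Hypothetical thresholds chosen for illustration only.
HOT_MAX_IDLE_DAYS = 7
HOT_MIN_ACCESSES_30D = 10
WARM_MAX_IDLE_DAYS = 90


@dataclass
class FileStats:
    """Access statistics a tiering engine might track per file (assumed fields)."""
    path: str
    days_since_last_access: int
    accesses_last_30_days: int


def classify(stats: FileStats) -> str:
    """Return 'hot', 'warm', or 'cold' from simple recency and frequency rules."""
    recently_used = stats.days_since_last_access <= HOT_MAX_IDLE_DAYS
    heavily_used = stats.accesses_last_30_days >= HOT_MIN_ACCESSES_30D
    if recently_used and heavily_used:
        return "hot"
    if stats.days_since_last_access <= WARM_MAX_IDLE_DAYS:
        return "warm"
    return "cold"


# Mirrors the text: hot data stays on flash (NVMe or SSD),
# warm and cold data migrate to high-capacity HDDs.
TIER_FOR_TEMPERATURE = {"hot": "flash", "warm": "hdd", "cold": "hdd"}


def target_tier(stats: FileStats) -> str:
    """Map a file's temperature to the tier it should live on."""
    return TIER_FOR_TEMPERATURE[classify(stats)]
```

A background job could call `target_tier` for each file and queue a migration whenever the result differs from the file's current tier.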

Predictive Analytics and Metadata Management

Beyond simple reactive tiering, advanced NAS solutions utilize predictive analytics to anticipate data access requests. By evaluating historical access trends, the system can pre-fetch data into the high-performance cache before a user or application even requests it.
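As a rough illustration of access prediction, the sketch below estimates the next access time from the average gap between past accesses and flags a file for pre-fetching when that estimate falls within a short lookahead window. The mean-interval heuristic, the function names, and the default window are assumptions for this example; production systems may use far richer models.

```python
from statistics import mean
from typing import Optional, Sequence


def predict_next_access(access_times: Sequence[float]) -> Optional[float]:
    """Estimate the next access as the last access plus the mean gap so far.

    access_times are timestamps in hours, sorted ascending.
    Returns None when there is too little history to compute a gap.
    """
    if len(access_times) < 2:
        return None
    gaps = [later - earlier for earlier, later in zip(access_times, access_times[1:])]
    return access_times[-1] + mean(gaps)


def should_prefetch(access_times: Sequence[float], now: float, lookahead: float = 1.0) -> bool:
    """True when the predicted access falls inside [now, now + lookahead]."""
    predicted = predict_next_access(access_times)
    return predicted is not None and now <= predicted <= now + lookahead
```

For a file read at hours 0, 24, and 48, the sketch predicts the next read at hour 72, so a check at hour 71.5 with a one-hour lookahead would trigger a pre-fetch.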

Furthermore, efficient metadata management plays a vital role in file access efficiency. Metadata—the data about the data—contains crucial information such as file size, creation date, and access permissions. By separating metadata from the actual file data and storing it exclusively on high-speed flash memory, NAS Storage systems can execute directory browsing and file searches in fractions of a second, drastically reducing the overhead associated with file retrieval.
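The benefit of separating metadata from file data can be sketched with a small in-memory index. Here a Python dictionary stands in for the flash-resident metadata store; the `Metadata` fields and the `MetadataIndex` class are hypothetical and chosen only to show that directory listings and attribute searches can be answered without touching file contents.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Metadata:
    size_bytes: int
    created: str  # ISO 8601 date, e.g. "2024-01-15"
    mode: int     # POSIX-style permission bits, e.g. 0o644


class MetadataIndex:
    """Stand-in for a metadata store kept on fast media (hypothetical)."""

    def __init__(self) -> None:
        self._entries: Dict[str, Metadata] = {}

    def put(self, path: str, meta: Metadata) -> None:
        self._entries[path] = meta

    def list_dir(self, directory: str) -> List[str]:
        """Return the direct children of a directory using metadata only."""
        prefix = directory.rstrip("/") + "/"
        return sorted(
            path for path in self._entries
            if path.startswith(prefix) and "/" not in path[len(prefix):]
        )

    def find_larger_than(self, size_bytes: int) -> List[str]:
        """Search by attribute without reading any file data."""
        return sorted(p for p, m in self._entries.items() if m.size_bytes > size_bytes)
```

Both queries scan only the index, which is the point of the design: the slower, high-capacity tier is consulted only when file contents are actually read.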

Security and Reliability in Automated Environments

Efficiency cannot come at the expense of data integrity or security. As files are dynamically moved across different tiers, the storage infrastructure must guarantee that data remains protected against corruption, hardware failure, and malicious attacks such as ransomware.

Integrating Immutable Snapshots for NAS

One of the most effective methods for securing data in these dynamic environments is the implementation of Immutable Snapshots for NAS. A snapshot is a point-in-time copy of the file system. When these snapshots are made immutable, they are locked at the storage level and cannot be altered, encrypted, or deleted by any user, administrator, or application for a specified retention period.
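The retention lock can be modeled in a few lines. This is a conceptual sketch only: real systems enforce immutability inside the storage layer rather than in application code, and the class and exception names here are invented for illustration.

```python
from datetime import datetime, timedelta, timezone


class RetentionLockError(Exception):
    """Raised when a delete is attempted before the retention period ends."""


class ImmutableSnapshot:
    """Point-in-time snapshot that refuses deletion until its lock expires."""

    def __init__(self, name: str, created: datetime, retention: timedelta) -> None:
        self.name = name
        self.created = created
        self.locked_until = created + retention

    def delete(self, now: datetime) -> bool:
        """Allow deletion only after the retention period, for any caller."""
        if now < self.locked_until:
            raise RetentionLockError(
                f"{self.name} is locked until {self.locked_until.isoformat()}"
            )
        return True


# Example: a snapshot with a 30-day retention period (illustrative values).
created = datetime(2024, 1, 1, tzinfo=timezone.utc)
snap = ImmutableSnapshot("daily-2024-01-01", created, timedelta(days=30))
```

Note that `delete` takes no user or role argument: the check depends only on time, which reflects the idea that not even an administrator can bypass the lock.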

Intelligent data placement systems work in tandem with Immutable Snapshots for NAS to ensure that security protocols do not hinder performance. Because snapshots typically capture only the blocks that have changed since the previous snapshot rather than a full copy of every file, they require little additional storage space. The system intelligently places these snapshot blocks on appropriate storage tiers.

If a ransomware attack occurs, administrators can use Immutable Snapshots for NAS to quickly revert the file system to its last known-clean state. This rapid recovery capability is a defining characteristic of resilient NAS solutions, providing business continuity without compromising the high-speed file access required for daily operations.
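Changed-block capture and rollback can be sketched as follows. Blocks are modeled as a dictionary from block ID to bytes, a snapshot stores only the blocks that differ from the previous state, and a restore replays those deltas over a base image. The function names are assumptions for this example, and deleted blocks are ignored for simplicity.

```python
from typing import Dict, Iterable

Blocks = Dict[int, bytes]


def changed_blocks(previous: Blocks, current: Blocks) -> Blocks:
    """Return only the blocks that were added or modified since `previous`."""
    return {
        block_id: data
        for block_id, data in current.items()
        if previous.get(block_id) != data
    }


def restore(base: Blocks, deltas: Iterable[Blocks]) -> Blocks:
    """Rebuild a point-in-time state by replaying snapshot deltas over the base."""
    state = dict(base)
    for delta in deltas:
        state.update(delta)
    return state


# Illustrative data: a base image, one clean snapshot, then an "encrypted" state.
base = {0: b"aa", 1: b"bb"}
clean = {0: b"aa", 1: b"BB", 2: b"cc"}
snapshot_1 = changed_blocks(base, clean)  # holds blocks 1 and 2 only
infected = {0: b"xx", 1: b"yy", 2: b"zz"}
recovered = restore(base, [snapshot_1])   # ignores the infected live state
```

Because the restore reads only the base image and the locked deltas, the contents of the infected live file system never influence the recovered state.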

Cost-Effective Resource Allocation

Implementing intelligent data placement within NAS Storage environments yields significant financial benefits. Flash storage is expensive, and provisioning an entire data center with NVMe drives is cost-prohibitive for most organizations.

By intelligently pushing cold data to cheaper, high-capacity drives, enterprises can optimize their hardware investments. Organizations achieve the performance profile of an all-flash array for their most critical applications while paying the per-gigabyte cost of traditional spinning disks for their archival data. This automated lifecycle management eliminates the need for manual data migrations, freeing up IT personnel to focus on strategic initiatives rather than routine storage maintenance.
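The cost argument reduces to a weighted average, sketched below. The function name and all prices passed to it are placeholders for illustration, not market data; substitute figures from actual vendor quotes.

```python
def blended_cost_per_gb(
    hot_fraction: float,
    flash_cost_per_gb: float,
    hdd_cost_per_gb: float,
) -> float:
    """Weighted per-gigabyte cost when only the hot fraction lives on flash."""
    if not 0.0 <= hot_fraction <= 1.0:
        raise ValueError("hot_fraction must be between 0 and 1")
    return hot_fraction * flash_cost_per_gb + (1.0 - hot_fraction) * hdd_cost_per_gb


# Placeholder inputs: 10% of data is hot, flash costs 0.20 and HDD 0.02 per GB.
example = blended_cost_per_gb(0.10, 0.20, 0.02)
```

With these placeholder inputs the blended figure is 0.038 per gigabyte, compared with 0.20 for an all-flash layout. The smaller the hot fraction, the closer the blended cost gets to the HDD price.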

Moreover, integrating Immutable Snapshots for NAS into this tiered architecture can reduce the need for additional hardware dedicated to redundancy. Organizations can maintain robust disaster recovery postures within their existing NAS storage footprints, maximizing the return on investment for their storage infrastructure.

Building a Resilient Data Infrastructure

As enterprise workloads become increasingly complex, the reliance on high-performance file sharing will only continue to grow. Administrators must deploy infrastructures capable of autonomously managing data placement to prevent access bottlenecks and optimize hardware utilization.

Modern NAS solutions provide the necessary framework to achieve this. Through predictive analytics, automated tiering, and rigorous metadata management, these systems deliver performance tailored to specific workload demands. At the same time, features like Immutable Snapshots for NAS provide the strong protection required to defend corporate data against modern digital threats.

By embracing these intelligent storage mechanisms, IT departments can build highly efficient, secure, and scalable architectures capable of supporting the next generation of enterprise applications.