Logging and telemetry systems form the backbone of modern IT observability. These systems generate massive volumes of sequential and random writes, placing sustained stress on storage infrastructure. When building these environments, engineers must design storage architectures that can absorb continuous, high-volume ingestion without adding latency that stalls the pipeline. Traditional storage arrays often struggle under these conditions, creating bottlenecks that can compromise system monitoring.

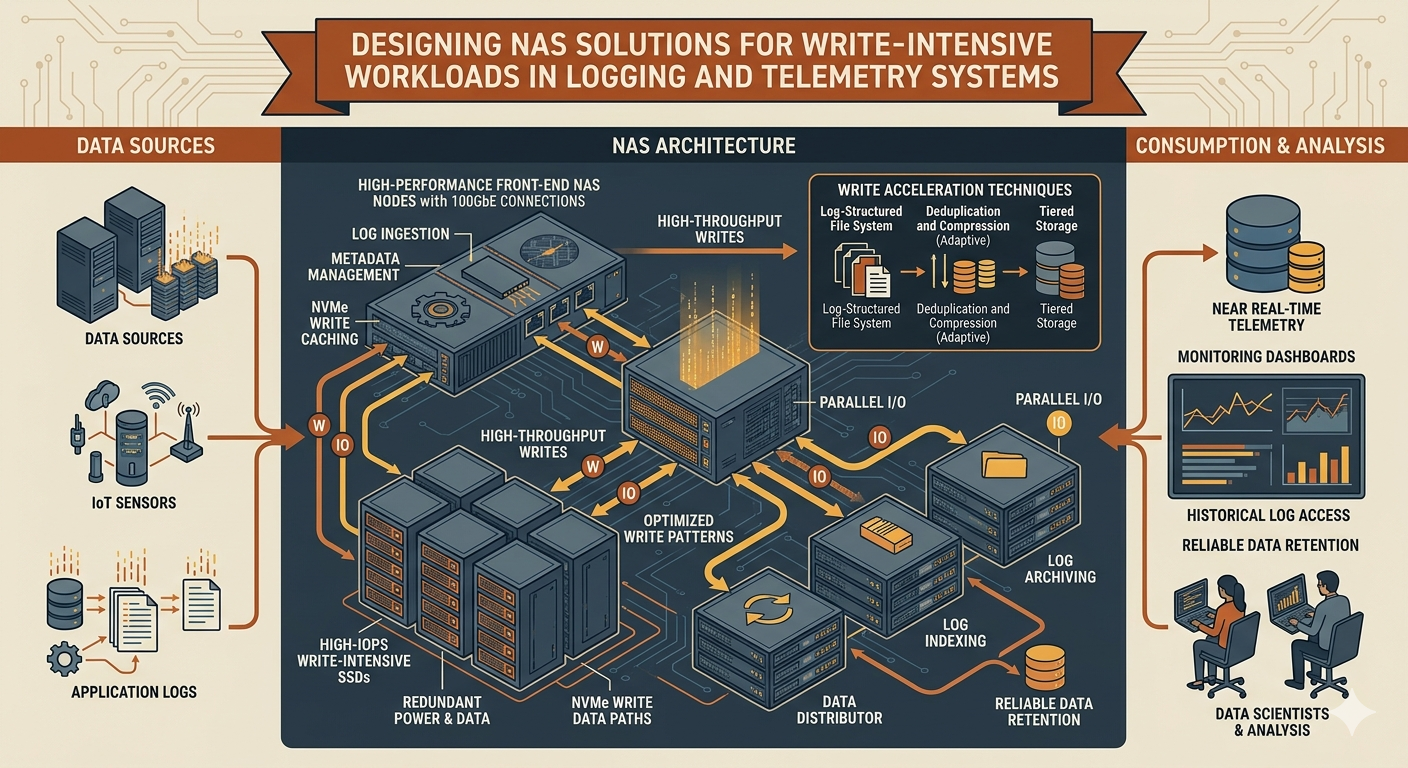

Specialized NAS solutions provide the file-level access and scalability that distributed logging frameworks require. Integrating cloud-native block storage, such as Azure disk storage, into hybrid architectures also lets organizations achieve high IOPS and throughput. This guide examines the technical requirements and architectural strategies for deploying robust NAS storage environments tailored to write-heavy telemetry workloads.

Understanding Write-Intensive Workloads

Logging frameworks, such as Elasticsearch, Splunk, or custom time-series databases, operate differently than standard transactional databases. They ingest data streams continuously from thousands of endpoints, microservices, and network devices. This creates a highly concurrent, write-heavy I/O profile.

The Mechanics of Logging and Telemetry

If the underlying storage cannot commit data to disk fast enough, the entire ingestion pipeline stalls, leading to dropped logs and blind spots in system observability. Designing effective NAS solutions requires a deep understanding of these specific I/O patterns. Telemetry data is typically appended to large files or written as numerous small files in rapid succession. The storage system must handle metadata updates efficiently, as the constant creation and modification of files generate significant metadata overhead. Engineers must account for both the payload data and the metadata when provisioning storage resources to prevent controller saturation.
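
A rough way to see why metadata matters is to count operations for the same ingest stream written in two different ways. The sketch below is a back-of-the-envelope model, not a measurement. The `WritePattern` fields and the figure of four metadata operations per new file are hypothetical assumptions, and real NFS or SMB clients may issue more or fewer operations.

```python
from dataclasses import dataclass


@dataclass
class WritePattern:
    """Hypothetical model of how a logging agent writes to a NAS share."""
    events_per_sec: float       # log events arriving per second at peak
    avg_event_bytes: int        # average serialized event size in bytes
    write_bytes: int            # bytes flushed to the share per write call
    file_bytes: int             # size at which the agent closes a file and opens a new one
    metadata_ops_per_file: int  # assumed metadata ops per new file (create, setattr, close, ...)


def io_profile(p: WritePattern) -> dict:
    """Estimate data writes/s and metadata ops/s for one write pattern."""
    ingest_bytes_per_sec = p.events_per_sec * p.avg_event_bytes
    data_ops = ingest_bytes_per_sec / p.write_bytes
    files_per_sec = ingest_bytes_per_sec / p.file_bytes
    metadata_ops = files_per_sec * p.metadata_ops_per_file
    return {
        "ingest_MBps": ingest_bytes_per_sec / 1e6,
        "data_ops": data_ops,
        "metadata_ops": metadata_ops,
    }


# The same 100 MB/s stream, written two different ways.
appended = WritePattern(200_000, 500, 64 * 1024, 1024**3, 4)
small_files = WritePattern(200_000, 500, 64 * 1024, 64 * 1024, 4)

for name, pattern in [("append to 1 GiB files", appended), ("one 64 KiB file per write", small_files)]:
    prof = io_profile(pattern)
    print(f"{name}: {prof['data_ops']:.0f} data ops/s, {prof['metadata_ops']:.1f} metadata ops/s")
```

Under these assumptions, appending to large files adds well under one metadata operation per second. Writing one small file per flush produces four metadata operations for every data write, and that metadata load is what saturates NAS controllers.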

Key Architectural Considerations for NAS Storage

When deploying storage for logging, hardware choices and protocol selections dictate overall system performance. A misaligned protocol can throttle the fastest solid-state drives.

Throughput and IOPS Requirements

Ingestion depends on low write latency, because a storage bottleneck backs up directly into the ingestion buffer. When evaluating NAS solutions, system architects must calculate the required write IOPS and sustained throughput from peak ingestion periods rather than averages. NVMe flash significantly reduces latency compared with traditional SAS and SATA interfaces. NVMe over Fabrics (NVMe-oF) carries the NVMe protocol across a network fabric, so that shared flash keeps much of that latency advantage.
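
Sizing from peak rather than average ingestion can be sketched as a short calculation. The write amplification factor, headroom, and I/O size below are hypothetical inputs; replace them with measured values. The sketch also assumes that all writes are issued at a single fixed size, which real workloads rarely do.

```python
import math


def required_write_performance(peak_events_per_sec, avg_event_bytes, io_size_bytes,
                               write_amplification=1.0, headroom=0.3):
    """Estimate the write IOPS and MB/s a storage tier must sustain at peak.

    write_amplification: hypothetical multiplier for extra bytes the logging
        stack writes per raw byte (e.g. indexes, transaction logs, merges).
    headroom: hypothetical safety margin for bursts above the observed peak.
    """
    bytes_per_sec = peak_events_per_sec * avg_event_bytes * write_amplification * (1 + headroom)
    iops = math.ceil(bytes_per_sec / io_size_bytes)  # assumes fixed-size writes
    mb_per_sec = bytes_per_sec / 1e6
    return iops, mb_per_sec


iops, mbps = required_write_performance(
    peak_events_per_sec=150_000, avg_event_bytes=800, io_size_bytes=128 * 1024,
    write_amplification=2.0, headroom=0.3,
)
print(f"size for at least {iops} write IOPS and {mbps:.0f} MB/s sustained")
```

Smaller I/O sizes push the IOPS figure up while throughput stays the same. For this reason, both numbers need to be checked against the storage system's specifications.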

The file-sharing protocol also matters. NFSv4.1 adds parallel NFS (pNFS) and session trunking, and SMB 3.0 adds SMB Multichannel. Both let a client spread I/O across multiple network connections. These capabilities are critical for distributing write loads across multiple network interfaces, so that no single connection becomes a chokepoint.
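
The number of parallel connections a client needs follows from its required throughput and the bandwidth of each path. This sketch assumes that writes spread evenly across paths, which is the goal of pNFS, session trunking, and SMB Multichannel but not a guarantee. The 70% utilization ceiling is a hypothetical planning margin.

```python
import math


def paths_needed(required_gbps, per_path_gbps, target_utilization=0.7):
    """Return (path count, per-path utilization) for an evenly balanced load.

    target_utilization is a hypothetical ceiling that leaves room for bursts
    and retransmits; real multipath stacks may not balance load perfectly.
    """
    usable_per_path = per_path_gbps * target_utilization
    count = math.ceil(required_gbps / usable_per_path)
    utilization = required_gbps / count / per_path_gbps
    return count, utilization


count, util = paths_needed(required_gbps=40, per_path_gbps=25)
print(f"{count} paths at {util:.0%} utilization each")
```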

Scaling Capacity and Performance

Telemetry volume can grow very quickly. A system that handles 500 gigabytes of daily ingestion today might face 5 terabytes per day within a year, a tenfold increase. Scale-out NAS storage architectures address this by letting administrators add nodes to a cluster, which increases capacity and compute resources together. This distributed approach avoids the controller bottlenecks common in legacy scale-up architectures, so performance grows roughly in step with storage space instead of being capped by a single controller.
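
The growth figures above can be turned into a simple capacity plan. This sketch makes several illustrative assumptions:

- compound monthly growth that matches the 500 GB/day to 5 TB/day example;
- a 30-day retention window;
- an 8+2 erasure-coding layout, which stores 1.25 bytes of raw capacity per usable byte;
- a hypothetical 50 TB of raw capacity per node.

```python
import math


def daily_ingest_tb(start_tb_per_day, monthly_growth, month):
    """Daily ingest after `month` months of compound growth."""
    return start_tb_per_day * monthly_growth ** month


def nodes_required(daily_tb, retention_days, protection_overhead, node_raw_tb):
    """Nodes needed to hold `retention_days` of data at the given daily rate.

    Using the current daily rate for the whole window overestimates while
    volume is still growing, which errs on the safe side.
    """
    stored_tb = daily_tb * retention_days * protection_overhead
    return math.ceil(stored_tb / node_raw_tb), stored_tb


# 0.5 TB/day growing to 5 TB/day over 12 months => 10x per year.
monthly_growth = 10 ** (1 / 12)

for month in (0, 6, 12):
    daily = daily_ingest_tb(0.5, monthly_growth, month)
    nodes, stored = nodes_required(daily, retention_days=30,
                                   protection_overhead=1.25, node_raw_tb=50)
    print(f"month {month:2d}: {daily:.2f} TB/day, {stored:.0f} TB on disk, {nodes} nodes")
```

Under these inputs, the cluster grows from one node to four within the year. This is why scale-out designs that add nodes without re-architecting suit telemetry workloads.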

Data Protection and Redundancy

Logging systems hold critical security and operational data, and losing it to a hardware failure creates serious compliance risk. However, parity-based RAID levels such as RAID 5 and RAID 6 carry a significant write penalty. Each small write requires reading the old data and parity, recomputing parity, and writing both back, which can severely degrade performance in a write-intensive environment. To mitigate this, engineers should choose layouts that balance write performance and fault tolerance. Options include RAID 10, which mirrors data instead of computing parity, and erasure coding configured so that large sequential writes fill whole stripes and skip the read-modify-write cycle.
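
The cost of the write penalty can be estimated with a common rule of thumb: divide raw drive IOPS by the average number of back-end I/Os that each front-end operation generates. The penalty values below are the standard figures for small random writes. The drive count and per-drive IOPS are hypothetical.

```python
# Back-end I/Os per front-end small random write, for common RAID levels.
WRITE_PENALTY = {
    "RAID 0": 1,   # no redundancy
    "RAID 10": 2,  # write both mirror copies
    "RAID 5": 4,   # read data, read parity, write data, write parity
    "RAID 6": 6,   # as RAID 5, plus read and write a second parity block
}


def effective_iops(raw_iops, write_fraction, layout):
    """Front-end IOPS a set of drives can serve for a given read/write mix."""
    read_fraction = 1.0 - write_fraction
    # Each read costs one back-end I/O; each write costs the layout's penalty.
    return raw_iops / (read_fraction + write_fraction * WRITE_PENALTY[layout])


raw = 80_000  # hypothetical: 8 drives x 10,000 IOPS each
for layout in ("RAID 10", "RAID 5", "RAID 6"):
    print(f"{layout}: ~{effective_iops(raw, write_fraction=0.9, layout=layout):,.0f} IOPS at 90% writes")
```

For a 90% write mix, RAID 10 serves roughly twice the IOPS of RAID 5, and nearly three times that of RAID 6, from the same drives. Arrays and erasure-coded clusters that coalesce log appends into full-stripe writes avoid the read-modify-write cycle. For well-buffered sequential ingestion, this estimate is therefore a worst case.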

Integrating Cloud Capabilities: Azure Disk Storage

Modern logging systems rarely exist entirely within a single local data center. They often span on-premises environments and public clouds. Microsoft Azure provides infrastructure that can host virtualized NAS appliances or serve as the underlying block storage for custom scale-out clusters.

Hybrid and Cloud-Native Approaches

For organizations deploying log aggregators in the cloud, configuring the underlying virtual machines with the correct block storage is paramount. Attaching high-performance Azure disk storage to compute instances helps the virtualized NAS storage sustain the necessary write speeds. Azure Ultra Disks and Premium SSD v2 let engineers set IOPS and throughput independently of capacity, so performance can be provisioned to match the current ingestion rate. Premium SSD (v1), by contrast, offers fixed performance tiers. The VM size also caps aggregate disk IOPS and throughput, so the VM must be sized together with its disks.
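
Both the disks and the VM impose limits, so a provisioning check has two parts: how many disks to stripe across, and whether the VM can drive the combined total. This sketch takes every limit as an input. The numbers in the example are placeholders, not published Azure figures, and `plan_disks` is a hypothetical helper rather than part of any Azure SDK.

```python
import math
from dataclasses import dataclass


@dataclass
class DiskPlan:
    disks: int
    iops_per_disk: int
    mbps_per_disk: int
    within_vm_limits: bool


def plan_disks(req_iops, req_mbps, disk_max_iops, disk_max_mbps, vm_max_iops, vm_max_mbps):
    """Spread a performance target over striped disks and check the VM-level cap.

    All limits are inputs: look them up for the chosen disk type and VM size.
    """
    disks = max(1, math.ceil(req_iops / disk_max_iops), math.ceil(req_mbps / disk_max_mbps))
    return DiskPlan(
        disks=disks,
        iops_per_disk=math.ceil(req_iops / disks),
        mbps_per_disk=math.ceil(req_mbps / disks),
        within_vm_limits=req_iops <= vm_max_iops and req_mbps <= vm_max_mbps,
    )


# Placeholder numbers for illustration only, not published Azure limits.
plan = plan_disks(req_iops=60_000, req_mbps=1_200,
                  disk_max_iops=20_000, disk_max_mbps=500,
                  vm_max_iops=80_000, vm_max_mbps=1_500)
print(plan)
```

If `within_vm_limits` comes back false, adding more disks will not help: the VM itself has to move to a larger size.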

With Ultra Disks or Premium SSD v2, infrastructure teams can decouple performance from capacity. They can adjust provisioned IOPS and throughput as telemetry demand fluctuates, for example raising them ahead of peak operational hours, subject to Azure's limits on how often these settings can be changed. This improves cost efficiency without sacrificing ingestion speed.

Best Practices for Designing Robust NAS Solutions

Optimizing the architecture requires careful configuration of both the storage media and the underlying network layer, because software settings largely determine how fully the hardware is used.

Caching and Tiering Strategies

Write caching absorbs sudden bursts of telemetry data. Acknowledging writes from NVRAM or a high-speed NVMe tier reduces application latency. Once data is safely committed to the fast tier, the system destages it in the background to a larger, more cost-effective capacity tier. If the destage rate keeps pace with average ingestion, the fast tier only needs to hold the excess that builds up during the longest expected burst. Automated tiering of this kind is a common feature of enterprise-grade NAS storage. It reserves expensive flash for active ingestion, while historical log data resides on cheaper, high-capacity mechanical drives or standard SSDs.
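
Whether a fast tier can absorb a burst depends on how quickly it fills compared with how quickly it destages. The minimal model below assumes a constant burst rate and a constant destage rate. The example figures are hypothetical inputs.

```python
def burst_absorption_seconds(cache_gb, ingest_mbps, destage_mbps):
    """Seconds until the fast tier fills during a burst, or None if destaging keeps up."""
    net_fill_mbps = ingest_mbps - destage_mbps
    if net_fill_mbps <= 0:
        return None  # the capacity tier drains data as fast as it arrives
    return cache_gb * 1000 / net_fill_mbps  # decimal units: 1 GB = 1000 MB


def cache_needed_gb(ingest_mbps, destage_mbps, burst_seconds):
    """Fast-tier capacity required to absorb a burst of the given length."""
    return max(0.0, ingest_mbps - destage_mbps) * burst_seconds / 1000


# Hypothetical figures: 3,000 MB/s burst, 1,800 MB/s destage rate.
print(burst_absorption_seconds(cache_gb=400, ingest_mbps=3_000, destage_mbps=1_800))
print(cache_needed_gb(ingest_mbps=3_000, destage_mbps=1_800, burst_seconds=600))
```

In this example, a 400 GB tier fills in a little over five minutes. A ten-minute burst would need about 720 GB, or a faster destage path.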

Network Infrastructure Optimization

A storage system is only as fast as the network connecting it to the compute nodes, so write-intensive workloads require high-bandwidth, low-latency networking. Pair 25GbE or 100GbE fabrics with Remote Direct Memory Access (RDMA), for example through NFS over RDMA or SMB Direct. This reduces CPU overhead, because the network adapter moves data directly to and from application memory without passing through the operating system kernel on the data path. In virtualized NAS deployments on Azure, RDMA is offered only on specific VM sizes. More commonly, Accelerated Networking and the network bandwidth allotted to the chosen VM size determine how much traffic the NAS can serve. In every case, the goal is to keep the network stack from becoming the limiting factor for high-speed data ingestion.
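
Link sizing must account for more than client traffic, because a scale-out cluster that replicates writes moves each byte across the fabric more than once. This sketch makes three assumptions: the cluster uses replication rather than erasure coding, traffic spreads evenly across nodes, and the ingest rate, replica count, and node count are hypothetical values.

```python
def per_node_ingress_gbps(cluster_ingest_mbps, replication_factor, nodes):
    """Average inbound network load per storage node, in Gb/s.

    Assumes each byte arrives once from a client and is then copied to
    (replication_factor - 1) other nodes, with traffic spread evenly across
    nodes. Protocol overhead is ignored; on full-duplex links, outbound
    replica traffic uses the other direction.
    """
    total_gbps = cluster_ingest_mbps * 8 / 1000 * replication_factor
    return total_gbps / nodes


load = per_node_ingress_gbps(cluster_ingest_mbps=3_000, replication_factor=3, nodes=4)
for link_gbps in (25, 100):
    print(f"{link_gbps}GbE: {load / link_gbps:.0%} of one link per node")
```

At 72% of a 25GbE link, the example leaves little headroom for bursts or rebuild traffic. A result like this justifies moving to 100GbE.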

Securing the Future of Telemetry Data Infrastructure

Building storage for logging and telemetry requires a systematic approach to hardware selection, protocol optimization, and network topology. General-purpose file servers often struggle under the sustained write pressure of modern observability tools. Organizations should deploy engineered architectures that prioritize write throughput, IOPS, and linear scalability.

The fundamental principles are the same whether you deploy physical appliances in a local data center or build virtualized clusters backed by cloud block storage:

- Evaluate the exact I/O profile.
- Implement aggressive caching.
- Use parallel network protocols.
- Plan carefully for rapid data growth.

By following these engineering practices, infrastructure teams can help keep their observability platforms highly available, performant, and ready to securely ingest the next generation of operational telemetry data.