Enhancing a NAS System for Efficient Handling of Concurrent File State Changes Without Lock Contention

Modern storage environments operate in a state of constant concurrency. Multiple users, applications, and automated processes often interact with the same datasets at the same time. This creates frequent file state changes, such as updates, renames, permission modifications, and metadata adjustments, that must be handled efficiently to maintain system stability. When these concurrent operations are not managed properly, they lead to lock contention: multiple processes compete for access to the same resources, and throughput drops while they wait on one another.

In advanced NAS solutions, managing concurrency is not just about allowing multiple access requests; it is about ensuring those requests do not interfere with each other. This becomes especially critical in Enterprise NAS environments, where workloads are large, distributed, and continuously evolving.

Understanding Concurrent File State Changes

A file state change refers to any modification in a file’s metadata or content state. In a concurrent environment, these changes may occur simultaneously across multiple clients or nodes. Examples include:

Simultaneous edits to shared files

Permission updates during active access

Metadata refresh triggered by background indexing

Automated system processes modifying file attributes

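When these operations overlap, the system must ensure consistency while avoiding conflicts. Traditionally, this is the job of locks: a process acquires a lock before changing a file's state, and any other process that needs the same lock waits until it is released.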

However, excessive reliance on locking can create bottlenecks. If too many processes wait for locks to be released, the system experiences delays, reduced throughput, and in extreme cases, operational stalls.

The Problem of Lock Contention

Lock contention occurs when multiple processes attempt to acquire the same lock at the same time. In a NAS System, this is particularly problematic because file systems are inherently shared resources.

Common causes of lock contention include:

High-frequency metadata updates

Centralized locking mechanisms

Poorly distributed file access patterns

Long lock retention during complex operations

As concurrency increases, the likelihood of contention grows, especially in Enterprise NAS deployments where hundreds or thousands of clients may interact with the same storage pool.

Why Traditional NAS Architectures Struggle

Traditional storage models often rely on strict locking to maintain consistency. While this ensures data integrity, it introduces performance limitations under high concurrency.

In many NAS solutions, locks are applied at a coarse granularity, such as a whole file or directory, which means even unrelated operations can block one another. This leads to unnecessary serialization of operations that could otherwise run in parallel.

As workloads become more dynamic, this approach fails to scale efficiently, especially in environments with high metadata activity.

Moving Toward Lock-Optimized Architectures

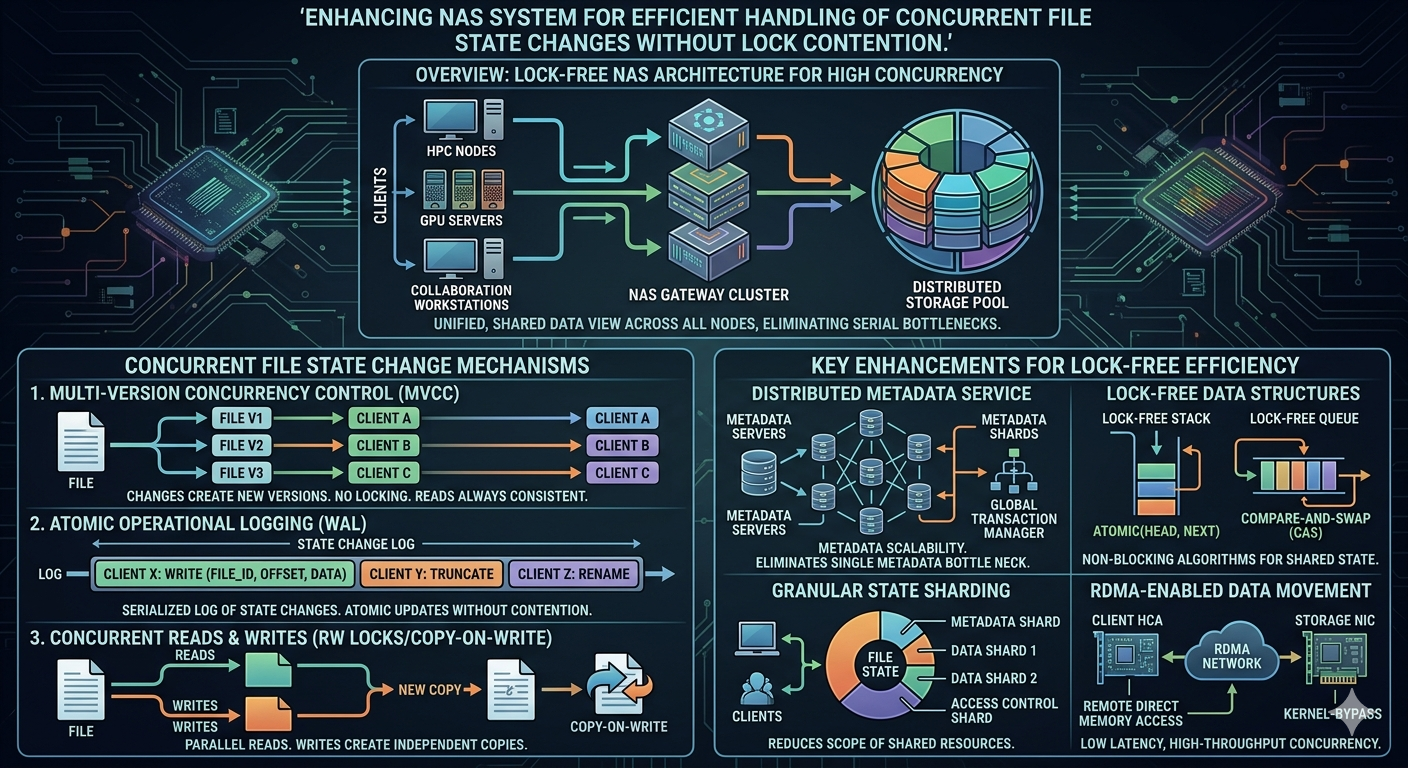

To address these challenges, modern NAS System designs focus on reducing lock dependency and improving concurrency handling. Instead of eliminating locks entirely, the goal is to minimize their scope and duration.

Key strategies include:

1. Fine-Grained Locking

Instead of locking entire files or directories, systems use smaller lock scopes. This allows multiple non-conflicting operations to proceed simultaneously.

2. Lock-Free or Lock-Light Operations

Where possible, certain metadata updates are handled using lock-free techniques, such as atomic compare-and-swap updates, so that no process has to wait on a lock held by another.

3. Transactional Metadata Handling

Operations are grouped into atomic transactions, ensuring consistency without requiring long-held locks.

4. Distributed Lock Management

In Enterprise NAS environments, locking responsibility is distributed across nodes rather than centralized, reducing bottlenecks.
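The first three strategies can be illustrated together with a minimal in-memory sketch. It is not taken from any particular NAS product: the `MetadataStore` and `FileRecord` names, the version counter, and the `commit` method are all assumptions made for illustration. Each file has its own lock (fine-grained), callers prepare their changes without holding any lock, and the lock is held only for a brief version check and commit (lock-light, transactional). Note that this is not truly lock-free, since a short per-file lock is still taken.

```python
import threading


class FileRecord:
    """Hypothetical per-file metadata record with its own lock."""

    def __init__(self):
        self.lock = threading.Lock()
        self.version = 0
        self.attrs = {}


class MetadataStore:
    """Illustrative store: one lock per file instead of one global lock."""

    def __init__(self):
        self._table_guard = threading.Lock()  # protects the table only, held briefly
        self._records = {}

    def _record(self, path):
        with self._table_guard:
            rec = self._records.get(path)
            if rec is None:
                rec = self._records[path] = FileRecord()
            return rec

    def read(self, path):
        """Return (version, copy of attributes) for a path."""
        rec = self._record(path)
        with rec.lock:
            return rec.version, dict(rec.attrs)

    def commit(self, path, expected_version, changes):
        """Apply changes atomically if nobody committed since expected_version."""
        rec = self._record(path)
        with rec.lock:
            if rec.version != expected_version:
                return False  # conflict: caller should re-read and retry
            rec.attrs.update(changes)
            rec.version += 1
            return True


store = MetadataStore()
version, attrs = store.read("/projects/report.docx")
first = store.commit("/projects/report.docx", version, {"mode": "0640"})
stale = store.commit("/projects/report.docx", version, {"mode": "0600"})
print(first, stale)
```

The second commit is rejected because it was prepared against an outdated version. Updates to different paths never touch the same per-file lock, so they contend only for the brief table lookup.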

Role of Intelligent Concurrency Control

Modern NAS solutions increasingly rely on intelligent concurrency control mechanisms. These systems analyze access patterns and dynamically adjust how locks are applied.

For example:

Frequently accessed files may use optimized locking paths

Read-heavy workloads may use shared read access, so that readers do not block one another

Write operations may be queued intelligently to avoid collisions

This adaptive behavior ensures that lock contention is minimized without compromising data integrity.
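One common building block for read-heavy workloads is a readers-writer lock, which lets any number of readers proceed together while writers get exclusive access. The sketch below is a simplified illustration, not a production design: the `ReadWriteLock` class and its method names are assumptions, and this reader-preferring variant can starve writers under a constant stream of reads.

```python
import threading
from contextlib import contextmanager


class ReadWriteLock:
    """Minimal readers-writer lock (reader-preferring; writers may starve)."""

    def __init__(self):
        self._cond = threading.Condition()
        self._readers = 0
        self._writer = False

    def acquire_read(self):
        with self._cond:
            while self._writer:  # wait only if a writer is active
                self._cond.wait()
            self._readers += 1

    def release_read(self):
        with self._cond:
            self._readers -= 1
            if self._readers == 0:
                self._cond.notify_all()  # wake any waiting writer

    def acquire_write(self):
        with self._cond:
            while self._writer or self._readers > 0:
                self._cond.wait()
            self._writer = True

    def release_write(self):
        with self._cond:
            self._writer = False
            self._cond.notify_all()

    @contextmanager
    def reading(self):
        self.acquire_read()
        try:
            yield
        finally:
            self.release_read()

    @contextmanager
    def writing(self):
        self.acquire_write()
        try:
            yield
        finally:
            self.release_write()


rw = ReadWriteLock()
attrs = {"owner": "alice"}
with rw.reading():  # many threads could hold this at once
    owner = attrs["owner"]
with rw.writing():  # exclusive: no readers or other writers
    attrs["owner"] = "bob"
```

An adaptive system could choose between a lock like this and a plain exclusive lock depending on the observed read/write ratio for a file.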

Impact on Enterprise NAS Performance

In Enterprise NAS environments, even minor inefficiencies in concurrency handling can scale into major performance issues. Since these systems support mission-critical applications, maintaining low latency and high throughput is essential.

By improving concurrency handling, organizations benefit from:

Faster response times during peak usage

Reduced risk of system-wide slowdowns

Improved scalability for growing workloads

More predictable performance under load

This is especially important in environments running analytics, virtualization, or collaborative workloads where file state changes are constant.

Reducing Metadata Bottlenecks

Metadata operations are often the primary source of lock contention. Every file modification involves metadata updates, which can quickly become a performance bottleneck.

Modern NAS System architectures address this by:

Caching metadata closer to compute nodes

Parallelizing metadata operations

Reducing dependency on centralized metadata servers

These optimizations significantly reduce contention and improve overall system responsiveness.
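Parallelizing metadata operations and avoiding a single central metadata server can be pictured as partitioning the metadata namespace. The sketch below is an in-process analogy under stated assumptions: the `ShardedMetadata` class is hypothetical, each shard is a dictionary with its own lock, and in a real cluster each shard would more likely be a separate metadata node.

```python
import threading
import zlib


class ShardedMetadata:
    """Illustrative metadata table split into independently locked shards."""

    def __init__(self, shard_count=8):
        self._shards = [({}, threading.Lock()) for _ in range(shard_count)]

    def _shard_for(self, path):
        # crc32 gives a stable hash across processes (unlike built-in hash() for str)
        index = zlib.crc32(path.encode("utf-8")) % len(self._shards)
        return self._shards[index]

    def put(self, path, attrs):
        table, lock = self._shard_for(path)
        with lock:  # only this shard is blocked, not the whole namespace
            table[path] = dict(attrs)

    def get(self, path):
        table, lock = self._shard_for(path)
        with lock:
            entry = table.get(path)
            return dict(entry) if entry is not None else None


meta = ShardedMetadata(shard_count=4)
meta.put("/vol1/logs/app.log", {"size": 1024})
meta.put("/vol1/data/set.csv", {"size": 2048})
print(meta.get("/vol1/logs/app.log"))
```

Operations on paths that hash to different shards never wait on each other, so contention falls roughly in proportion to the number of shards when access is evenly spread.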

Balancing Consistency and Performance

One of the biggest challenges in storage design is balancing consistency with performance. Strong consistency often requires strict locking, while high performance favors reduced synchronization.

Advanced NAS solutions achieve this balance by:

Using eventual consistency models where appropriate

Prioritizing critical operations over background updates

Applying adaptive consistency rules based on workload type

This ensures that the system remains both reliable and efficient.
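Prioritizing critical operations over background updates can be sketched with a simple priority queue. The `OperationQueue` class, the two priority levels, and the operation names below are illustrative assumptions rather than the scheduler of any specific product.

```python
import heapq
import itertools

CRITICAL = 0    # e.g. client-visible renames or permission changes
BACKGROUND = 1  # e.g. indexing or attribute refresh


class OperationQueue:
    """Illustrative queue: critical operations first, FIFO within a priority."""

    def __init__(self):
        self._heap = []
        self._seq = itertools.count()  # tie-breaker that preserves submit order

    def submit(self, priority, name):
        heapq.heappush(self._heap, (priority, next(self._seq), name))

    def next_operation(self):
        _priority, _seq, name = heapq.heappop(self._heap)
        return name

    def __len__(self):
        return len(self._heap)


queue = OperationQueue()
queue.submit(BACKGROUND, "reindex")
queue.submit(CRITICAL, "rename")
queue.submit(BACKGROUND, "refresh-thumbnail")
queue.submit(CRITICAL, "chmod")

order = [queue.next_operation() for _ in range(len(queue))]
print(order)  # ['rename', 'chmod', 'reindex', 'refresh-thumbnail']
```

Background work still completes, but it no longer delays operations that a client is actively waiting on.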

The Future of Concurrent NAS Systems

As data environments continue to evolve, concurrency demands will only increase. Future Enterprise NAS architectures are expected to rely more heavily on:

AI-driven lock prediction and optimization

Real-time workload classification

Autonomous conflict resolution mechanisms

Fully distributed metadata management systems

These advancements are expected to further reduce lock contention and enable near-seamless concurrent operations across massive storage environments.

Conclusion

Efficient handling of concurrent file state changes is essential for maintaining high-performance storage systems. Traditional locking mechanisms, while reliable, often introduce unnecessary bottlenecks in high-concurrency environments.

By adopting intelligent concurrency control, fine-grained locking, and distributed metadata management, modern NAS solutions can significantly reduce lock contention. In Enterprise NAS environments, these improvements translate directly into better scalability, higher throughput, and more stable performance.

Ultimately, enhancing a NAS System for concurrency is not about removing locks entirely, but about making them smarter, lighter, and more adaptive to real-world workloads.